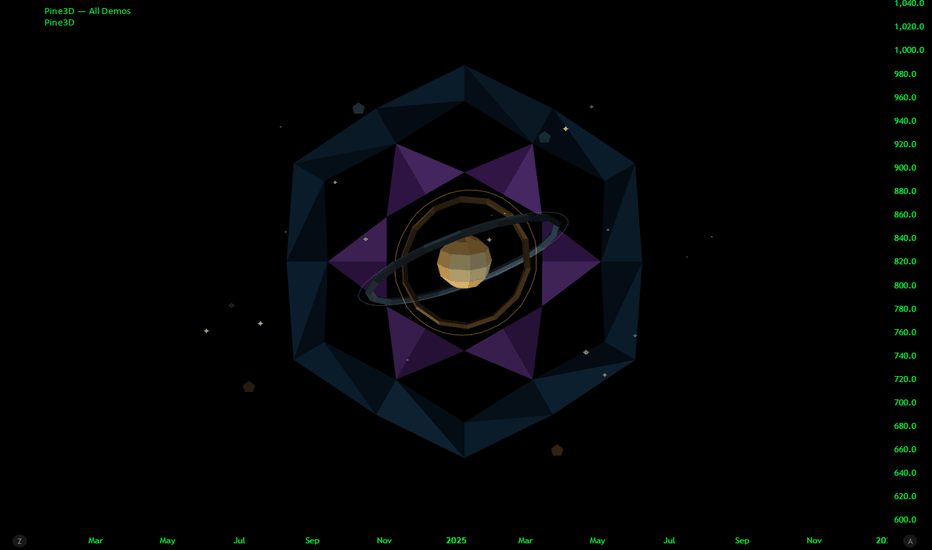

Pine3D: A Native 3D Graphical Rendering Engine

Pine3D is a full 3D rendering engine for TradingView, powered by Pine Script™ v6.

Pine3D pushes forward the frontier of 3D rendering on TradingView. It provides a fully fledged graphics engine behind an intuitive, chainable, object-oriented API. You can build meshes, transform them in world space, light them, cast shadows, project them through a perspective camera, and render the result directly on your chart. You never have to deal with the trigonometry, the synchronization, or the optimization yourself.

The library offers a streamlined workflow for anyone who wants to visualize data in 3D, without needing to know the complex math that has previously gatekept such indicators. Pine3D does all the heavy lifting, including aggressive optimization techniques designed for production-ready indicators.

The entire API is chainable and tag-addressable. Spawning a mesh, registering it, pointing the camera at it, and rendering the frame is a four-line affair:

p3d.Mesh mybox = p3d.cube(40.0, color.orange).setTag("hero").rotateBy(0.0, 45.0, 0.0)
scene.add(mybox)
scene.lookAt("hero")
p3d.render(scene)

🔷 SURFACES: CONTOUR BAND RENDERING

Pine Script imposes a hard ceiling of 100 polylines and 500 lines per indicator. At first glance this looks fatal for dense 3D meshes. Every naively drawn triangle burns one of those 100 polyline slots, or two of the 500 line slots, and the budget evaporates within a few hundred faces.

The conventional escape hatch is strip stitching: tracing a polyline forward along one row of a grid and back along the next, which packs a ribbon of quads into a single drawing slot. It buys a meaningful multiplier, but it pays for that multiplier with two structural constraints baked into the geometry itself:

One color per strip. A polyline carries a single stroke and fill color, so every cell along the ribbon must share the same shade. The moment you want per cell lighting, contour banding, or value driven gradients, every color change forces a new polyline and the budget collapses.

One contiguous ribbon per slot. Strips can only describe topologically connected runs of cells. Disjoint regions, holes, islands, and value clustered fragments scattered across the surface each demand their own polyline.

Pine3D breaks both constraints at once.

At the core of the engine sits the technique that redefines the limits of visual fidelity: contour band rendering using degenerate bridge stitching. The technique quantizes a surface's elevation into colored bands. It then collapses every cell that falls inside the same band, no matter where it sits on the screen, into one continuous, hole-aware polyline path per band. Invisible, zero-width bridges are threaded between disjoint islands, so a single polyline can carry thousands of polygon-equivalent fragments scattered across the geometry.

The result:

A single polyline can render up to 2,000 disconnected triangle equivalents, spread across arbitrarily separated regions of the surface.

This gives a theoretical ceiling of around 200,000 disconnected faces (100 polylines × 2,000 triangle equivalents) inside the 100-polyline budget. Strip-based stitching cannot enter that regime at any color count above one.

A 40 x 40 heightmap (39 × 39 cells at two triangles each, about 3,000 triangles) renders inside the budget with full per-band contour coloring and room to spare. Stress harnesses have run 40 x 80 grids.

Each band's path is depth sorted, near-plane culled, and cached between bars. Once the geometry is built, only the screen-space projection runs per frame.
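The headline figures above are plain arithmetic, reproduced below in Python as a sanity check. The sketch makes two assumptions about how the figures were counted. First, a rows x cols heightmap splits into (rows - 1) x (cols - 1) quads of two triangles each. Second, "40 x 80" means 40 by 80 samples.

```python
# Hypothetical sanity check of the quoted budget figures (not Pine3D code).
POLYLINE_BUDGET = 100          # max_polylines_count ceiling
LINE_BUDGET = 500              # max_lines_count ceiling
TRIANGLES_PER_POLYLINE = 2000  # per-polyline figure quoted above


def triangles_in_grid(rows, cols):
    """ASSUMPTION: rows x cols samples -> (rows-1) x (cols-1) quads, two triangles per quad."""
    return 2 * (rows - 1) * (cols - 1)


naive_polyline_faces = POLYLINE_BUDGET  # one triangle per polyline
naive_line_faces = LINE_BUDGET // 2     # two lines per triangle
ceiling = POLYLINE_BUDGET * TRIANGLES_PER_POLYLINE

print(naive_polyline_faces, naive_line_faces)  # 100 250
print(ceiling)                                 # 200000
print(triangles_in_grid(40, 40))               # 3042
print(triangles_in_grid(40, 80))               # 6162
```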

This algorithm enables scenes with extreme detail relative to the 100-polyline limit. It also shifts the optimization focus from "drawing limits" to "CPU limits", which Pine3D handles natively with aggressive caching at every layer of the pipeline. The contour technique is currently integrated into the surface() function. The same compression strategy can be generalized to any mesh class, and ultimately to full scene rendering, in future versions.
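The band-collapsing idea behind degenerate bridge stitching can be illustrated outside Pine. The Python sketch below is hypothetical and is not Pine3D's source.

It assigns each grid cell to a band by the mean of its four corner heights. That banding rule is an assumption, since the library's quantization rule is not documented here. It then joins every cell of a band into one closed path. Each disjoint cell is reached by a bridge from a shared anchor and left along the same segment, so every bridge encloses zero area. The shoelace check confirms that the stitched path covers exactly the summed cell area.

The sketch works in 2D grid coordinates and ignores projection, depth sorting, and holes.

```python
# Hypothetical sketch of band grouping + degenerate bridge stitching (not Pine3D source).
from collections import defaultdict


def quantize(h, lo, hi, levels):
    """Map a height to a band index in [0, levels - 1]."""
    if hi == lo:
        return 0
    t = (h - lo) / (hi - lo)
    return min(levels - 1, max(0, int(t * levels)))


def bands_from_grid(heights, levels):
    """Group cells by band; each cell becomes a counter-clockwise quad in (col, row) space."""
    rows, cols = len(heights), len(heights[0])
    flat = [v for row in heights for v in row]
    lo, hi = min(flat), max(flat)
    groups = defaultdict(list)
    for r in range(rows - 1):
        for c in range(cols - 1):
            corners = (heights[r][c], heights[r][c + 1], heights[r + 1][c + 1], heights[r + 1][c])
            band = quantize(sum(corners) / 4.0, lo, hi, levels)  # ASSUMPTION: mean-height banding
            groups[band].append([(c, r), (c + 1, r), (c + 1, r + 1), (c, r + 1)])
    return groups


def stitch(polygons):
    """Join disjoint polygons into one path using out-and-back (zero-area) bridges."""
    anchor = polygons[0][0]
    path = [anchor]
    for poly in polygons:
        if path[-1] != poly[0]:
            path.append(poly[0])  # bridge out from the anchor
        path.extend(poly[1:])     # trace the cell outline
        path.append(poly[0])      # close the outline
        if poly[0] != anchor:
            path.append(anchor)   # bridge back along the same segment
    return path


def shoelace(path):
    """Signed area of a closed path (positive when counter-clockwise)."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(path, path[1:] + path[:1]):
        total += x1 * y2 - x2 * y1
    return total / 2.0


heights = [[10, 10, 0, 0, 10, 10],
           [10, 10, 0, 0, 10, 10]]
groups = bands_from_grid(heights, 3)
top = stitch(groups[2])               # two disjoint cells, one path
print(len(groups[2]), shoelace(top))  # 2 2.0
```

On a chart, the bridge segments would also need a transparent stroke to stay invisible. That detail is outside what this sketch models.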

Non-uniform grids out of the box. surface() accepts optional axisX and axisZ arrays that override the default uniform spacing with custom column and row positions. As a result, logarithmic strike spacing on an option volatility surface, irregular timestamp spacing on a market depth heatmap, or any other unevenly sampled grid renders correctly without resampling the data first. The contour band engine, axis ticks, and gridBox cage all snap to the custom positions automatically.
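One plausible way to honor custom axes is to rescale each axis linearly into the surface's world span, so that relative spacing survives. The Python sketch below relies on two assumptions. The size argument of surface() is taken to be the full world width, and positions are mapped to [-size/2, size/2]. Pine3D's actual mapping may differ.

```python
# Hypothetical axis mapping for non-uniform grids (not Pine3D source).
import math


def axis_to_world(axis, size):
    """Rescale increasing axis positions into [-size/2, size/2], preserving relative spacing."""
    lo, hi = axis[0], axis[-1]
    if hi == lo:
        return [0.0 for _ in axis]
    return [(a - lo) / (hi - lo) * size - size / 2.0 for a in axis]


# Default behavior: evenly spaced column indices.
uniform = axis_to_world(list(range(5)), 200.0)
print(uniform)  # [-100.0, -50.0, 0.0, 50.0, 100.0]

# Hypothetical log-spaced strikes for an option volatility surface.
strikes = [80, 90, 100, 110, 125, 150]
log_cols = axis_to_world([math.log(k) for k in strikes], 200.0)
gaps = [b - a for a, b in zip(log_cols, log_cols[1:])]
print([round(g, 1) for g in gaps])  # unequal gaps follow the log spacing of the strikes
```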

A full contour surface is just a handful of lines; the damped ripple below builds once and never needs updating:

//@version=6
indicator("Pine3D - Contour Surface", overlay = false, max_polylines_count = 100, max_lines_count = 500, max_labels_count = 500)
import Alien_Algorithms/Pine3D/1 as p3d

var p3d.Scene scene = p3d.newScene()
var p3d.Mesh heatmap = na

if barstate.isfirst
    // Damped cosine ripple sampled on an N x N grid
    int N = 20
    matrix<float> data = matrix.new<float>(N, N, 0.0)
    for r = 0 to N - 1
        for c = 0 to N - 1
            float dx = c - (N - 1) / 2.0
            float dz = r - (N - 1) / 2.0
            float d = math.sqrt(dx * dx + dz * dz) * 0.7
            data.set(r, c, math.cos(d) * math.exp(-d * 0.12) * 50.0)
    // 24 contour bands graded from blue (low) to red (high), with cage and axis labels
    heatmap := p3d.surface(data, 200.0, color.blue, color.red, 24)
      .gridBox()
      .gridLabels(color.white, "X", "Amplitude", "Z")
    scene.add(heatmap)
    scene.camera.orbit(35.0, 25.0, 380.0)

if barstate.islast
    p3d.render(scene, lighting = true)

🔷 TRAIL3D: STREAMED OSCILLATOR PATHS

Trail3D is a first class streaming primitive built for visualizing two correlated time series as a 3D ribbon evolving through time. You give it a rolling buffer capacity and push (u, v) samples bar by bar; the primitive maintains the buffer, builds the ribbon geometry, and renders it inside a normalized bounding cube so the path always fits cleanly in view regardless of the underlying data range.

Under the hood, Trail3D is a coordinated bundle of polylines: one for the main ribbon, two for optional shadow projections onto the back wall and floor, and one for the wireframe cage. All four are depth sorted and occlusion clipped against the rest of the scene, and the primitive auto normalizes incoming samples against the rolling window's min/max so streaming data always fills the cube without manual scaling.

This enables a class of visualizations that would otherwise require dozens of polylines and manual buffer management: phase space portraits, Lissajous figures, oscillator pair correlations, attractor trajectories, and any "two indicators evolving together over time" study. The demo above shows a sine and cosine pair pushing samples each bar to trace a clean spiral inside the cage, the same pattern you would use to plot RSI vs MFI, momentum vs volatility, or any custom (u, v) signal pair.
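The rolling-window normalization described above can be sketched in Python with a bounded deque. Old samples fall off automatically once capacity is reached. Each axis is rescaled against the window's own min/max, so the path always spans the cube.

The class and method names are hypothetical. Placing samples evenly along a third (time) axis is an assumption about the ribbon layout.

```python
# Hypothetical rolling-buffer sketch of Trail3D-style normalization (not Pine3D source).
from collections import deque


class RollingTrail:
    """Rolling (u, v) buffer mapped into a cube of side `size`."""

    def __init__(self, size, capacity):
        self.size = size
        self.samples = deque(maxlen=capacity)  # oldest sample is evicted automatically

    def push(self, u, v):
        self.samples.append((u, v))

    def points(self):
        """Return (t, u, v) triples, each coordinate in [-size/2, size/2]."""
        n = len(self.samples)
        if n == 0:
            return []
        half = self.size / 2.0
        us = [u for u, _ in self.samples]
        vs = [v for _, v in self.samples]
        u_lo, u_hi, v_lo, v_hi = min(us), max(us), min(vs), max(vs)

        def scale(x, lo, hi):
            # A flat series has no range; park it on the cube's center plane.
            return 0.0 if hi == lo else (x - lo) / (hi - lo) * self.size - half

        out = []
        for i, (u, v) in enumerate(self.samples):
            t = i / (n - 1) * self.size - half if n > 1 else 0.0  # ASSUMPTION: even time spacing
            out.append((t, scale(u, u_lo, u_hi), scale(v, v_lo, v_hi)))
        return out


trail = RollingTrail(220.0, 3)
for u, v in [(0, 0), (5, 10), (10, 20), (20, 40)]:
    trail.push(u, v)      # the fourth push evicts the first sample
print(trail.points()[0])  # (-110.0, -110.0, -110.0)
```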

A full streamed scene is a handful of lines:

//@version=6
indicator("Pine3D - Trail3D", overlay = false, max_polylines_count = 100, max_lines_count = 500, max_labels_count = 500)
import Alien_Algorithms/Pine3D/1 as p3d

var p3d.Scene scene = p3d.newScene()
var p3d.Trail3D trail = na

if barstate.isfirst
    trail := p3d.trail3D(220.0, 200, color.yellow)
      .cage(true)
      .axisLabels("sin", "cos", color.white)
    // Recolor the two projection polylines
    trail._uProj.col := #00ffff69
    trail._vProj.col := #ff00ff71
    scene.add(trail)
    scene.camera.orbit(215.0, 20.0, 360.0)

float phase = bar_index * 0.15
float sinX = math.sin(phase) * 100.0
float cosY = math.cos(phase) * 100.0

// One sample per confirmed bar, so realtime ticks do not flood the buffer
if barstate.isconfirmed
    trail.pushSample(sinX, cosY)

// Only the final frame is visible, so skip rendering on historical bars
if barstate.islast
    p3d.render(scene)

🔷 BARS3D: CATEGORICAL 3D BAR CHARTS

bars3D() turns any series of values into a fully lit, depth sorted 3D bar chart in a single call. Each bar is height mapped to its value, color graded between a low and high color, and packed into one combined mesh with per bar depth grouping so individual bars sort correctly even inside the merged geometry. The companion updateBars() mutator refreshes heights, colors, and labels in place every bar without rebuilding geometry, making it suitable for live rankings, rolling windows, and animated comparisons.

The chainable barLabels(catNames, valNames) helper attaches category labels at the base of each bar and value labels at the top, both depth sorted with the rest of the scene. Category labels are set once at build time, while value labels can be passed to updateBars(values, valLabels = ...) each frame to reflect live data. Combined with wireGrid() for the floor and a contour surface() in the background, bars3D() becomes the centerpiece of dashboards comparing assets, sectors, timeframes, or any categorical metric.

Negative values are handled automatically: bars below zero extrude downward from the base plane with reversed face winding, so signed series like PnL, delta, or momentum histograms render correctly without any extra setup.
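The signed-bar behavior can be sketched in Python. Scaling heights by the largest absolute value is an assumption, because the text above only says that bars are height mapped to their values. The winding check also uses a single front quad rather than a full box.

The point is the last step. A bar with negative height turns its quad upside down, which flips the normal, so reversing the vertex order restores it.

```python
# Hypothetical sketch of signed bar heights and winding reversal (not Pine3D source).
def bar_heights(values, max_height):
    """ASSUMPTION: heights scale with value / max(|value|), so the tallest bar reaches max_height."""
    peak = max(abs(v) for v in values) or 1.0
    return [v / peak * max_height for v in values]


def front_quad(x0, x1, h):
    """Front face of a bar spanning y = 0..h in the z = 0 plane."""
    quad = [(x0, 0.0), (x1, 0.0), (x1, h), (x0, h)]
    # A downward bar is upside down; reverse the order to keep the original winding.
    return quad[::-1] if h < 0 else quad


def winding(quad):
    """z-component of the cross product of the first two edges (its sign gives the facing)."""
    (ax, ay), (bx, by), (cx, cy) = quad[:3]
    return (bx - ax) * (cy - ay) - (by - ay) * (cx - ax)


heights = bar_heights([50.0, -100.0, 25.0], 200.0)
print(heights)  # [100.0, -200.0, 50.0]
for h in heights:
    print(h, winding(front_quad(0.0, 1.0, h)) > 0)  # second column is True for every bar
```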

A complete labeled bar chart is just a few lines:

//@version=6
indicator("Pine3D - Bars3D", overlay = false, max_polylines_count = 100, max_lines_count = 500, max_labels_count = 500)
import Alien_Algorithms/Pine3D/1 as p3d

var p3d.Scene scene = p3d.newScene()
var p3d.Mesh bars = na

// Bar-over-bar volume change for the current bar and the five bars before it
array<float> values = array.from(volume - volume[1], volume[1] - volume[2], volume[2] - volume[3], volume[3] - volume[4], volume[4] - volume[5], volume[5] - volume[6])
array<string> names = array.from("ΔV0", "ΔV-1", "ΔV-2", "ΔV-3", "ΔV-4", "ΔV-5")

if barstate.isfirst
    bars := p3d.bars3D(values, 30.0, 30.0, 10.0, color.blue, color.red, 200.0)
      .barLabels(names)
    scene.add(bars)
    p3d.wireGrid(scene, 300.0, 300.0, 6, 6, color.new(color.gray, 80))
    scene.camera.orbit(215.0, 25.0, 360.0)

if barstate.islast
    // Refresh heights, colors, and auto-formatted value labels in place
    bars.updateBars(values)
    p3d.render(scene, lighting = true)

Omitting valLabels in updateBars() tells the engine to auto-format each numeric value via str.tostring(). Pass valLabels only when you need custom strings.

🔷 SCATTER CLOUDS: POINTS IN 3D SPACE

Pine3D treats scatter clouds as a first-class use case without needing a dedicated scatter API. Label3D is the primitive, and scene.add(array) registers a whole array in one batch operation. You can therefore scatter up to 500 points anywhere in 3D space, each with independent color, symbol, size, and tooltip, and have them depth sorted and occlusion clipped against the rest of the scene automatically.

Each point is a fully addressable Label3D with mutable fields. You can change position, textColor, bgColor, labelStyle (any label.style_* glyph, including circles, squares, diamonds, triangles, crosses, arrows, and flags), labelSize (any size.* preset), and the label text per point, per bar. The renderer reads these mutations every frame, so animation is just direct field assignment.

This unlocks a wide class of visualizations:
- clustered data scatter
- k-means visualizations
- particle systems
- parametric surfaces sampled as point clouds
- gradient-colored attractors
- multi-class classification overlays
- structured curves like the demo above

The double helix demo plots two intertwined parametric strands as ~500 points with alternating colors and per-point sizing, all inside the standard scene.add(array) pipeline.

The pattern is straightforward: build the array once in barstate.isfirst, add it to the scene, then mutate point fields per bar to animate.

//@version=6
indicator("Pine3D - Scatter Cloud", overlay = false, max_polylines_count = 100, max_lines_count = 500, max_labels_count = 500)
import Alien_Algorithms/Pine3D/1 as p3d

var p3d.Scene scene = p3d.newScene()
var array<p3d.Label3D> points = array.new<p3d.Label3D>()

if barstate.isfirst
    // Create 500 points at the origin; positions are assigned on the last bar
    for i = 0 to 499
        p3d.Vec3 pos = p3d.vec3(0.0, 0.0, 0.0)
        points.push(p3d.Label3D.new(position = pos, txt = "•"))
    scene.add(points)
    scene.camera.orbit(35.0, 20.0, 400.0)

if barstate.islast
    for i = 0 to points.size() - 1
        float t = i * 0.05 + bar_index * 0.01
        p3d.Label3D pt = points.get(i)
        // Helix: the angle advances with the index while the height rises linearly
        pt.position := p3d.vec3(80.0 * math.cos(t), i * 0.4 - 100.0, 80.0 * math.sin(t))
        // Alternate colors between neighboring points
        pt.textColor := i % 2 == 0 ? color.aqua : color.fuchsia
    p3d.render(scene)

----------------------------------------------------------------------------------------------------------------

🔷 TWO LAYER ARCHITECTURE

Pine3D ships as a clean, two layer library:

🔸 Layer 1 - DIY API. First-principles building blocks (Vec3, Mesh, Camera, Light, Scene, plus world-space overlay primitives) for total creative control. Author your own geometry, camera behavior, lighting setup, and scene graph from scratch.

🔸 Layer 2 - High Level Helpers. Production-ready wrappers such as surface(), bars3D(), trail3D(), updateBars(), updateSurface(), sphere(), torus(), cylinder(), and wireGrid(), plus the chainable contour helpers gridBox() and gridLabels(). Each wraps the primitives into a few lines of code. Scatter clouds use the standard Label3D primitive directly.

The object model is chainable and scene oriented, so complex setups still read cleanly.

🔷 FEATURE LIST

Contour Surface Rendering - The most powerful 3D surface engine ever released for Pine Script. Render tens of thousands of polygon equivalent faces using a single polyline per contour band, delivering smooth, continuous terrain with natural ridges and valleys.

Adaptive Rail Sharing - Solid meshes drawn with the default linefill backend reuse one edge line between adjacent coplanar faces, averaging roughly 1.6 lines per face instead of the naive two, pushing practical mesh capacity up to ~360 faces depending on topology.

Interior Face Culling on Merge - mergeMeshes(meshes, removeInterior = true) detects coincident faces with opposing normals and strips them, so voxel style scenes (stacked cubes, block walls, lattice geometry) ship only their exterior shell and spend no budget on hidden interior faces.

True Perspective Camera System - Full 3D camera with position, target, fov, and orbit() controls. Supports cinematic camera movement, lookAt by mesh tag, and realistic depth.

Real Time Lighting and Shadows - Directional and point lights with configurable ambient, shadow strength, self shadowing, and a spatial grid acceleration structure for fast shadow queries.

High Performance Update System - updateSurface() and updateBars() let you animate massive datasets bar by bar without rebuilding geometry, keeping CPU usage minimal.

Rich Primitive Library - Cubes, cuboids, spheres, cylinders, tori, pyramids, planes, discs, circles, custom meshes, and the groundbreaking bars3D() with automatic labels.

Streamed Trail Primitive - trail3D() maintains a rolling buffer of (u, v) samples and renders them as a 3D ribbon inside a bounding cube, with optional projections onto the back wall and floor and a wireframe cage.

Depth Sorted Overlays - 3D labels, lines, polylines, wire grids, and trails, all correctly occluded and painter sorted against the rest of the scene.

Professional Contour Helpers - gridBox() and gridLabels() automatically add clean bounding boxes and axis titles, ticks, and series names that refresh on every updateSurface() call.

Tag Based Scene Graph - Every Mesh, Label3D, Line3D, and Polyline3D can carry a string tag. Scene exposes getMesh(), getLabel(), getLine(), getPolyline(), lookAt(), and remove() by tag, turning your scene into a lookup-by-name graph instead of an index-juggling exercise.

Chainable, Intuitive API - Everything is designed for maximum readability and speed of development. Build complex scenes in just a few lines.

Production Ready Optimizations - World vertex caching, view projection caching, face preprocessing cache, shadow grid cache, and contour geometry cache, all managed automatically.
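Interior face culling on merge can be sketched as a bucket-and-compare pass. Faces are keyed by their set of snapped vertex positions. Any two faces in the same bucket whose normals point in opposite directions are dropped.

This Python version is a hypothetical illustration, not Pine3D's mergeMeshes() code. It assumes transforms are already baked into world-space vertices.

```python
# Hypothetical interior-face culling sketch (not Pine3D's mergeMeshes() source).
from collections import defaultdict


def face_normal(face):
    """Unnormalized normal from the first three vertices (right-hand rule)."""
    (ax, ay, az), (bx, by, bz), (cx, cy, cz) = face[:3]
    u = (bx - ax, by - ay, bz - az)
    v = (cx - ax, cy - ay, cz - az)
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def remove_interior(faces, eps=1e-6):
    """Drop pairs of coincident faces whose normals point in opposite directions."""
    def key(face):
        # Order-independent key: vertex positions snapped to an eps-sized grid.
        return frozenset(tuple(round(c / eps) for c in p) for p in face)

    buckets = defaultdict(list)
    for i, face in enumerate(faces):
        buckets[key(face)].append(i)

    drop = set()
    for idxs in buckets.values():
        for a in idxs:
            for b in idxs:
                if a < b and a not in drop and b not in drop:
                    na, nb = face_normal(faces[a]), face_normal(faces[b])
                    if sum(x * y for x, y in zip(na, nb)) < 0:  # opposing normals
                        drop.update((a, b))
    return [f for i, f in enumerate(faces) if i not in drop]


# Two unit cubes touching at x = 1: cube A's right wall and cube B's left wall coincide.
wall_a = [(1, 0, 0), (1, 1, 0), (1, 1, 1), (1, 0, 1)]
wall_b = wall_a[::-1]  # same square, opposite winding, so the normal points the other way
floor = [(0, 0, 0), (1, 0, 0), (1, 0, 1), (0, 0, 1)]
print(remove_interior([wall_a, wall_b, floor]) == [floor])  # True
```

Duplicate faces with the same winding are kept, because only opposing normals mark a shared interior wall.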

----------------------------------------------------------------------------------------------------------------

🔷 THE RENDERER

Every frame is produced by a single call to render(scene, ...). The renderer runs the full pipeline: world transform, camera transform, back-face culling, occlusion culling, depth sort, directional or point lighting with shadows, and perspective projection.
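The pipeline can be illustrated with a deliberately tiny Python sketch covering three of its stages: back-face culling, a painter's depth sort, and perspective projection. The sketch rests on several assumptions:
- Triangles are already in camera space, with the camera at the origin looking down +z.
- fov acts as the perspective scale factor, as setFov() describes it.
- Lighting, shadows, and the occlusion raster are left out.

Pine3D's actual stage ordering and culling rules may differ.

```python
# Hypothetical three-stage render sketch (not Pine3D's renderer).
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def project(p, fov):
    """Perspective divide for a camera at the origin looking down +z; fov is a scale factor."""
    x, y, z = p
    return (fov * x / z, fov * y / z)


def draw_list(faces, fov, near=1.0):
    """Cull, sort far-to-near (painter's algorithm), and project camera-space triangles."""
    kept = []
    for face in faces:
        if any(p[2] < near for p in face):
            continue  # crude near-plane rejection: drop the whole face
        normal = cross(sub(face[1], face[0]), sub(face[2], face[0]))
        center = tuple(sum(p[i] for p in face) / len(face) for i in range(3))
        if dot(normal, center) >= 0:
            continue  # back face: the normal points away from the camera
        kept.append((center[2], [project(p, fov) for p in face]))
    kept.sort(key=lambda item: item[0], reverse=True)  # farthest first
    return [pts for _, pts in kept]


near_face = [(0, 0, 5), (0, 1, 5), (1, 1, 5)]
far_face = [(0, 0, 10), (0, 1, 10), (1, 1, 10)]
back_face = [(0, 0, 7), (1, 1, 7), (0, 1, 7)]
behind = [(0, 0, -1), (0, 1, -1), (1, 1, -1)]
frame = draw_list([near_face, back_face, far_face, behind], fov=100.0)
print(len(frame), frame[0])  # 2 [(0.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
```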

⚠ render() clears the entire chart drawing pool at the start of every call. Every polyline, line, label, and linefill on the chart is deleted before Pine3D redraws, not just the ones it created. If you mix Pine3D with manual label.new(), line.new(), or similar calls, those drawings must be emitted after render(), or they will be wiped every frame.

🔸 Setup Requirements. Pine3D consumes polylines, lines, and labels simultaneously, so your indicator() declaration must raise all three budgets, and the library must be imported under an alias:

indicator("My 3D Scene", overlay = false,
     max_polylines_count = 100,
     max_lines_count = 500,
     max_labels_count = 500)
import Alien_Algorithms/Pine3D/1 as p3d

🔸 render() parameters.

maxFaces (int, default 100). Hard cap on solid faces drawn per frame. Contour bands, wireframe edges, labels, lines, and overlay polylines are not counted against this cap, and are bounded only by TradingView's global 100 polyline / 500 line / 500 label budgets.

culling (bool, default true). Enable back face culling.

lighting (bool, default false). Enable diffuse shading. Reads scene.light if set; otherwise falls back to the render() args.

lightDir (Vec3). Overrides scene.light.direction when provided. Points toward the light.

ambient (float, default 0.3). Minimum brightness for shadowed faces (0.0-1.0).

wireframe (bool, default false). Force outline only output for the entire scene.

occlusion (bool, default true). Sparse raster pass that drops hidden faces before drawing. Major perf win on dense scenes.

occlusionRaster (int, default 768). Raster resolution of the occlusion buffer. Lower = faster but coarser; higher = stricter hidden face rejection.

Explicit render() args always win over scene.light, which makes render() the right place for ad hoc, per-frame lighting tweaks.
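A common way to turn the ambient and lightDir parameters into a face brightness is Lambertian shading with an ambient floor, sketched below in Python. This document does not give the formula Pine3D actually uses, so treat the shading curve as an assumption. The hypothetical resolve() helper only mirrors the stated rule that explicit render() arguments win over scene.light.

```python
# Hypothetical shading sketch: ambient floor + Lambert diffuse (not Pine3D's exact formula).
import math


def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def shade(normal, light_dir, ambient=0.3):
    """Brightness in [ambient, 1]. light_dir points toward the light, like render()'s lightDir."""
    n, l = normalize(normal), normalize(light_dir)
    diffuse = max(0.0, sum(a * b for a, b in zip(n, l)))
    return ambient + (1.0 - ambient) * diffuse


def resolve(render_arg, scene_value):
    """An explicit render() argument wins; otherwise use the scene.light setting."""
    return scene_value if render_arg is None else render_arg


ambient = resolve(None, 0.25)                 # no explicit ambient: scene value applies
print(shade((0, 1, 0), (0, -1, 0), ambient))  # 0.25 (a face turned away keeps only ambient)
```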

----------------------------------------------------------------------------------------------------------------

🔷 MESH DRAWING MODES

Two independent axes control how a mesh appears on the chart:

🔸 Style (via mesh.setStyle(...)) - what gets drawn:

"solid". Filled faces. Default.

"wireframe". All edges, no fill. Shows interior geometry.

"wireframe_front". Only front-facing edges. Cleaner silhouette for convex meshes.

🔸 Draw Mode (via mesh.drawMode) - which TradingView primitive carries the solid faces:

"linefill" (default). Uses the line and linefill budgets and is recommended for all new code.
- An adaptive rail-sharing optimization reuses one edge line between adjacent coplanar faces, pushing practical capacity up to ~360 faces per mesh depending on topology.
- It supports in-place updates via updateSurface() and updateBars().
- Rails are drawn transparent, so solid faces in this mode have no visible outline. Use a wireframe style or the "poly" drawMode if you need stroked edges.

"poly". Legacy polyline backend. Capacity is ~100 faces and there are no in-place updates, but it renders the face outline using mesh.lineStyle and mesh.lineWidth. Use it only when you need styled solid-face outlines.

Wireframe styles always render with line primitives regardless of drawMode. Stroke width and style on edges (and on poly-mode face outlines) come from mesh.lineWidth and mesh.lineStyle, which you mutate by direct field assignment.

----------------------------------------------------------------------------------------------------------------

🔷 QUICK START

The best practice lifecycle is simple:

Create one persistent Scene with newScene().

Build meshes and helper overlays once in barstate.isfirst.

On later bars, mutate objects in place with transforms or helper mutators like updateBars() and updateSurface().

Call render(scene, ...) once per frame. It automatically clears the previous chart drawings.

A complete, lit, animated 3D scene is still a handful of lines:

//@version=6
indicator("My First 3D Scene", overlay = false, max_polylines_count = 100, max_lines_count = 500, max_labels_count = 500)
import Alien_Algorithms/Pine3D/1 as p3d

var p3d.Scene scene = p3d.newScene()
var p3d.Mesh sun = na

if barstate.isfirst
    // Light direction (points toward the light) and a 0.3 minimum brightness for shadowed faces
    scene.setLightDir(1.0, -1.0, 0.5).setAmbient(0.3)
    sun := p3d.sphere(50.0, 16, 12, color.orange).setTag("sun")
    scene.add(sun)
    p3d.wireGrid(scene, 300.0, 300.0, 6, 6, color.new(color.gray, 80))
    scene.camera.orbit(35.0, 25.0, 220.0)

if barstate.islast
    // Spin 1.5 degrees on every execution of the last bar (rotateBy() works in degrees)
    sun.rotateBy(0.0, 1.5, 0.0)
    p3d.render(scene, lighting = true)

----------------------------------------------------------------------------------------------------------------

🔷 RECOMMENDED USAGE PATTERN

Declare your Scene and major meshes with var.

Build geometry once in barstate.isfirst.

Use updateSurface() and updateBars() on later bars instead of rebuilding meshes.

Use scene-level helpers like wireGrid() when you want overlays added immediately.

Use trail3D() when you want a streamed, oscillator-style path with built-in wall projections and cage geometry.

For scatter clouds, build an array once, hand it to scene.add(pts), then mutate pt.position, pt.textColor, etc. each bar to animate.

Use the mesh-level gridBox() and gridLabels() (contour) and barLabels() (bars) to attach overlays to the mesh setup chain. They are drained into the scene by scene.add(mesh).

🔷 CONSIDERATIONS

scene.clear() vs render(). scene.clear() removes objects from the scene graph (meshes, labels, lines, polylines). render() only clears the previous frame's TradingView drawings and redraws from the current scene graph. You almost never need scene.clear() in the build-once-and-update pattern.

Global scope series for updateSurface() / updateBars(). If your data uses Pine's history-referencing operator ([]) or calls functions like ta.rsi(), ta.atr(), or request.security(), declare them at global scope so Pine tracks their bar-by-bar history. Calling them only inside an if barstate.islast block produces inconsistent results or compiler warnings and errors.

gridLabels() tick values auto refresh. When you call updateSurface() , any tick value labels created by gridLabels() are automatically updated to reflect the new data range. Axis titles and positions stay constant. You don't need to rebuild them.

barLabels() value labels via updateBars(). Create category labels once with mesh.barLabels(catNames) at build time, then pass valLabels to updateBars() on each frame. Value labels are refreshed automatically. Don't call barLabels() again.

Lighting convenience methods are chainable. scene.setLightDir(), setLightPos(), setLightMode(), setAmbient(), setShadowStrength(), and showLightSource() all return Scene and can be chained: scene.setLightMode("point").setLightPos(0, 200, 150).setAmbient(0.25).

Mesh transforms return Mesh. moveTo(), moveBy(), rotateTo(), rotateBy(), scaleTo(), scaleUniform(), setTag(), setStyle(), setColor(), show(), and hide() all return Mesh for chaining: mesh.moveTo(0, -20, 0).rotateTo(0, 45, 0).setStyle("solid").

Degrees vs radians. rotateTo() and rotateBy() on Mesh expect degrees. The low level Vec3.rotateX/Y/Z() methods expect radians.

scene.lookAt() is tag-only. scene.lookAt(t) accepts a string tag and points the camera at that mesh. To aim the camera at an arbitrary Vec3, call scene.camera.lookAt(vec) directly.

remove(tag) removes one object. The search order is meshes, then labels, then lines, then polylines, and the first hit wins. Avoid reusing tags across primitive types if you intend to delete by tag.

Shadow grid acceleration is directional-light only. The spatial shadow grid is only built when lightMode == "directional". Point lights fall back to a linear O(M) scan, so heavy shadow scenes are fastest in directional mode.

guiShift and yOffset. scene.guiShift and scene.yOffset position the 3D viewport on the chart without consuming historical bar slots. Increase guiShift to push the scene rightward into future bar space; adjust yOffset to slide it vertically in price units.

bar_time projection. All chart drawings are emitted with xloc.bar_time , so the scene can sit arbitrarily far left or right of bar_index without forcing Pine to extend its history buffer. This is what keeps the engine stable on long charts and future projected scenes.

barLabels() without values. When you call mesh.barLabels(catNames) and omit value labels, every later updateBars(values) call auto-formats the numeric values via str.tostring(). Pass valLabels only when you need custom strings.

Direct mesh.vertices mutation requires invalidateCache(). Transform mutators (moveTo, rotateBy, scaleTo, etc.) invalidate the world vertex cache on their own. Only raw index writes like mesh.vertices.set(i, newVec) need a manual mesh.invalidateCache() call to force re-projection. Skipping it makes the renderer draw stale geometry.

Drawing budgets fail silently. If a scene emits more than 100 polylines, 500 lines, or 500 labels in a single frame, TradingView silently deletes the oldest drawings beyond the cap instead of raising a runtime error. Missing geometry almost always means a budget overrun. To bring the frame back inside the caps, lower maxFaces, drop a contour level, or simplify overlay primitives.

render() deletes non-Pine3D drawings too. Every render() call clears polyline.all, line.all, label.all, and linefill.all before redrawing. Any manual label.new(), line.new(), etc. issued before render() in the same frame will be wiped. Issue custom drawings after the render call if you need them to persist.

mergeMeshes() preserves depth grouping. When every source mesh passed into mergeMeshes() has the same vertex and face count (e.g. identical primitives in a voxel grid), the merged mesh auto derives depth group boundaries so the combined geometry still sorts correctly per original instance. Mixing primitives with different topologies disables the grouping.

CPU timeouts: knobs to turn. Pine Script enforces a per bar execution budget, and dense scenes can trip it before the drawing budget ever does. If a scene compiles but times out at runtime, reach for these levers in order: lower occlusionRaster (e.g. 768 -> 384) for the biggest single perf win, reduce maxFaces to cap the solid face pool, drop levels on contour surfaces, simplify sphere/torus segment counts, and gate heavy work behind barstate.islast so history bars only build geometry rather than render it.
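The first-hit rule in remove(tag) is why reused tags are risky. The Python sketch below models a scene as a hypothetical dict of lists and follows the documented search order: meshes, then labels, then lines, then polylines. Deleting "hero" three times removes the mesh first, then the label, and then finds nothing.

```python
# Hypothetical model of remove(tag) search order (not Pine3D source).
def remove_by_tag(scene, tag):
    """Delete the first object carrying `tag`; return the collection it came from, or None."""
    for kind in ("meshes", "labels", "lines", "polylines"):  # documented search order
        items = scene[kind]
        for i, obj in enumerate(items):
            if obj.get("tag") == tag:
                del items[i]
                return kind
    return None


scene = {
    "meshes": [{"tag": "hero"}],
    "labels": [{"tag": "hero"}, {"tag": "note"}],
    "lines": [],
    "polylines": [],
}
print(remove_by_tag(scene, "hero"))  # meshes
print(remove_by_tag(scene, "hero"))  # labels
print(remove_by_tag(scene, "hero"))  # None
```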

----------------------------------------------------------------------------------------------------------------

🔷 MORE EXAMPLES

The following scenes were all built entirely in Pine Script™ v6 using Pine3D as the rendering layer. They exist to demonstrate that the library is a real engine capable of complex, production grade visualizations.

🔸 4D Hypercube (Tesseract). A rotating tesseract, projected from 4D to 3D to 2D in real time using a custom 4D rotation matrix layered on top of Pine3D's standard projection pipeline.

🔸 Solar System. Following the publication of my 3D Solar System back in 2024, which introduced new graphical rendering concepts into Pine Script, we have seen a wave of various interpretations of the underlying vector classes, ranging from tutorials to niche specific integrations using hardcoded math. It became clear that a unified architecture was needed, one that would lower the barrier to entry while simultaneously handling the optimization process, which is both complex and error prone to do manually.

That architecture is what Pine3D delivers. Below is a re-creation of the classic 3D Solar System rebuilt entirely on top of the library. It uses a fraction of the original code, renders roughly 5x faster, and adds real lighting cast directly from the Sun. It does all this while consuming only a third of the available drawing budget, thanks to the occlusion and culling mechanisms Pine3D handles out of the box.

----------------------------------------------------------------------------------------------------------------

🔷 API REFERENCE

🔸 Top Level Entry Points. newScene() creates a ready to use Scene with a default camera and light. render(scene, ...) draws the current frame and auto clears the previous frame's chart drawings; see the Renderer section above for the full parameter list. vec3(x, y, z) creates a Vec3. colorBrightness() is an exported color utility helper.

🔸 Mesh Factories.

Primitives - cube(), cuboid(), pyramid(), plane(), sphere(), cylinder(), torus(), grid(), disc(), circle() for ready-made geometry.

customMesh(verts, faces) - Low-level escape hatch for authoring your own topology.

mergeMeshes(meshes, tag, removeInterior) - Bakes transforms and combines many meshes into one. With removeInterior = true, coincident faces with opposing normals (e.g. shared walls between adjacent cubes in a grid) are culled so only the exterior shell survives. This is a major optimization for dense voxel-style scenes.

surface(heights, size, lowCol, highCol, levels, axisX, axisZ) - Creates a contour surface mesh.

bars3D(values, barWidth, barDepth, spacing, lowCol, highCol, maxHeight) - Creates a combined 3D bar chart mesh; add labels with the chainable barLabels(names, values) method.

🔸 UDT Constructors. Overlay primitives and face descriptors are plain UDTs. Because these types have many fields, always instantiate them with named arguments rather than positional ones, e.g. Label3D.new(position = pos, txt = "•"):

Face - fields: vi (array of vertex indices into the parent mesh), col. Used when authoring customMesh() topology; every face must have at least 3 indices and should be planar.

Label3D - fields: position, txt, textColor, bgColor, labelStyle, labelSize, fontFamily, tooltip, visible, tag. Only position is required.

Line3D - fields: start, end, col, width, visible, tag, lineStyle.

Polyline3D - fields: points, col, fillColor, width, closed, visible, tag, lineStyle.

Vec3.new(x, y, z) or the vec3(x, y, z) shorthand.

🔸 Trail Primitive. trail3D(size, capacity, trailCol, minSamples) creates a streamed Trail3D primitive with a main trail, two projection polylines, and a cage polyline. scene.add(trail) registers the sub-primitives into the scene.
- capacity is internally clamped to 300 samples to keep the rolling buffer inside Pine's execution budget. Passing a larger value silently resolves to 300.
- minSamples (default 60) is the sample count at which the cage reaches its full cube width. Below that count, the cage stays cube-shaped and the samples stretch across it. Above it, the cage grows rightward at a fixed step until capacity is reached.
- Trail3D methods: pushSample(), axisLabels(), cage(), moveTo(), show(), hide().
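The capacity clamp and the cage-growth rule can be sketched in Python. The 300-sample clamp and the minSamples threshold come from the description above. The per-sample growth step is hypothetical, because the text only says the step is fixed. Here it is set to size / minSamples, which keeps the spacing the samples had when the cage first reached cube width.

```python
# Hypothetical sketch of Trail3D capacity clamping and cage growth (not Pine3D source).
MAX_CAPACITY = 300  # documented internal clamp


def effective_capacity(capacity):
    """Larger requests silently resolve to the clamp."""
    return min(capacity, MAX_CAPACITY)


def cage_width(n_samples, size, capacity, min_samples=60):
    """Cube-wide cage until min_samples, then a fixed per-sample growth up to capacity."""
    n = min(n_samples, effective_capacity(capacity))
    if n <= min_samples:
        return size
    step = size / min_samples  # HYPOTHETICAL step size
    return size + (n - min_samples) * step


print(effective_capacity(1000))    # 300
print(cage_width(30, 220.0, 200))  # 220.0
```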

🔸 Mesh Methods.

Transform - moveTo(), moveBy(), rotateTo(), rotateBy(), scaleTo(), scaleUniform().

Appearance - setColor(), setFaceColor(), setStyle(), show(), hide(), setTag().

Stroke styling (direct) - mesh.lineWidth := 3 and mesh.lineStyle := line.style_dashed control the width and style of every visible mesh edge in wireframe modes, and of the solid face outlines in drawMode = "poly".

Shadow opt-out (direct) - mesh.castShadow := false excludes the mesh from shadow casting while it still receives light. This is useful for ghost overlays, debug geometry, or semi-transparent meshes you do not want occluding the scene.

Lifecycle - clone(), faceCount(), invalidateCache().

Data mutation - updateSurface() and updateBars() refresh persistent meshes in place. updateBars() refreshes any bar label positions automatically; pass catLabels / valLabels to also update the text.

Contour helpers - gridBox() and gridLabels() queue overlays on the mesh and hand them to the scene when you call scene.add(mesh).

Bar helpers - barLabels() is chainable on a bars3D() mesh and queues its category and value labels for the next scene.add(mesh).

Note: rotateTo() and rotateBy() expect degrees. The low level Vec3.rotateX/Y/Z() methods work in radians.

🔸 Scene Methods.

Lighting - setLightDir(), setLightPos(), setLightMode(), setAmbient(), setShadowStrength(), showLightSource().

Scene graph - add(mesh), add(label), add(array), add(line), add(polyline), add(trail), remove(index), remove(tag), clear().

Lookup and navigation - getMesh(), getLabel(), getLine(), getPolyline(), lookAt(), totalFaces().

Cache control - call invalidateLightCache() after mutating the light direction or scene bounds externally. Call invalidateAllCaches() to also invalidate every mesh's world vertex cache, for example after directly mutating mesh.vertices.

Note: scene.clear() clears the scene graph itself. render() only clears the previous frame's TradingView drawings.

🔸 Camera Methods. setPosition(x, y, z) moves the camera. lookAt(x, y, z) / lookAt(vec3) points at a world space target. orbit(angleX, angleY, distance) does a spherical orbit around the current target. setFov(val) sets the perspective scale factor. Camera fields ( position , target , fov ) are also directly mutable via assignment when you need to tune them outside the provided setters, e.g. scene.camera.fov := 1200.0 .
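The angle units and axis roles used by orbit() are not documented here, so the following Python sketch simply assumes degrees, with angleX as elevation and angleY as azimuth. It shows the spherical-coordinate placement an orbit around a target implies; orbit_position is a hypothetical helper, not Pine3D's API.

```python
import math


def orbit_position(target, angle_x_deg, angle_y_deg, distance):
    """Camera position on a sphere of radius `distance` around `target`.

    Hypothetical convention: angle_x is elevation above the XZ plane and
    angle_y is azimuth around the Y axis, both in degrees.
    """
    ax = math.radians(angle_x_deg)
    ay = math.radians(angle_y_deg)
    tx, ty, tz = target
    return (tx + distance * math.cos(ax) * math.sin(ay),
            ty + distance * math.sin(ax),
            tz + distance * math.cos(ax) * math.cos(ay))
```

Whatever the angles, the camera stays exactly `distance` away from the target, which is what makes an orbit feel stable while it rotates.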

🔸 Light Field Mutation. In addition to the scene level convenience setters, every field on scene.light is directly mutable for fine grained tuning: scene.light.selfShadow := true enables self shadowing, scene.light.shadowBias := 0.2 adjusts the shadow acne offset, scene.light.shadowStrength and scene.light.ambient are also exposed. Mutate them after newScene() or between frames; the renderer reads them every call.

🔸 Vec3 Methods. Core math: add() , sub() , scale() , negate() , dot() , cross() , length() , normalize() , distanceTo() , lerp() . Rotation and helpers: rotateX() , rotateY() , rotateZ() , copy() , toString() .
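To make the core vector math concrete, here is a minimal Python sketch of a Vec3 type with a subset of the methods listed above. It assumes a right-handed rotation about the Y axis; Pine3D's own axis conventions are not stated here, so treat the class as illustrative rather than the library's implementation.

```python
import math
from dataclasses import dataclass


@dataclass
class Vec3:
    """Illustrative 3D vector mirroring some of the method names above."""
    x: float
    y: float
    z: float

    def add(self, o: "Vec3") -> "Vec3":
        return Vec3(self.x + o.x, self.y + o.y, self.z + o.z)

    def sub(self, o: "Vec3") -> "Vec3":
        return Vec3(self.x - o.x, self.y - o.y, self.z - o.z)

    def scale(self, k: float) -> "Vec3":
        return Vec3(self.x * k, self.y * k, self.z * k)

    def negate(self) -> "Vec3":
        return self.scale(-1.0)

    def dot(self, o: "Vec3") -> float:
        return self.x * o.x + self.y * o.y + self.z * o.z

    def cross(self, o: "Vec3") -> "Vec3":
        return Vec3(self.y * o.z - self.z * o.y,
                    self.z * o.x - self.x * o.z,
                    self.x * o.y - self.y * o.x)

    def length(self) -> float:
        return math.sqrt(self.dot(self))

    def normalize(self) -> "Vec3":
        n = self.length()
        return self.scale(1.0 / n) if n > 0 else Vec3(0.0, 0.0, 0.0)

    def distance_to(self, o: "Vec3") -> float:
        return self.sub(o).length()

    def lerp(self, o: "Vec3", t: float) -> "Vec3":
        return self.add(o.sub(self).scale(t))

    def rotate_y(self, rad: float) -> "Vec3":
        # Rotation about the Y axis; the angle is in radians, like the low-level Vec3 methods
        c, s = math.cos(rad), math.sin(rad)
        return Vec3(self.x * c + self.z * s, self.y, -self.x * s + self.z * c)
```

Since the mesh-level rotateTo() and rotateBy() take degrees, a degree-based wrapper would convert first, e.g. `v.rotate_y(math.radians(90))`.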

🔸 Overlay Primitive Methods.

Label3D - moveTo() , moveBy() , setText() , setTextColor() , setTooltip() , show() , hide() , setTag() .

Line3D - setStart() , setEnd() , setPoints() , setColor() , show() , hide() , setTag() .

Polyline3D - setColor() , show() , hide() , setTag() .

Every UDT field is mutable via direct assignment for properties without a chainable setter:

Label3D - bgColor , labelStyle (label.style_*), labelSize (size.*), fontFamily (font.family_*), visible .

Line3D - width , lineStyle (line.style_solid / _dashed / _dotted / _arrow_left / _arrow_right / _arrow_both), visible .

Polyline3D - width , lineStyle (line.style_solid / _dashed / _dotted only; arrow styles are not supported by TradingView's polyline primitive), fillColor , closed , visible .

Mutations are read per frame by the renderer, so they animate freely.

🔸 High Level Scene Helpers. wireGrid(scene, w, d, divX, divZ, col) adds a depth-sorted ground grid. scene.add(array) adds a batch of labels in one call; this is the idiomatic way to push a scatter cloud into the scene.

🔸 Mesh Level Chainable Overlays. mesh.barLabels(names, values, ...) adds category and value labels on a bars3D() mesh. mesh.gridBox(col, divs) adds a wireframe bounding box cage on a surface() mesh. mesh.gridLabels(col, xName, yName, zName, ticks, fmt) adds axis titles and tick value labels on a surface() mesh; tick values auto refresh on updateSurface() . All three are queued on the mesh and drained into the scene by scene.add(mesh) .

----------------------------------------------------------------------------------------------------------------

This work is licensed under CC BY-NC-SA 4.0: use is free for non-commercial purposes, provided that Alien_Algorithms is credited in the description for the underlying software. For commercial licensing, contact Alien_Algorithms.


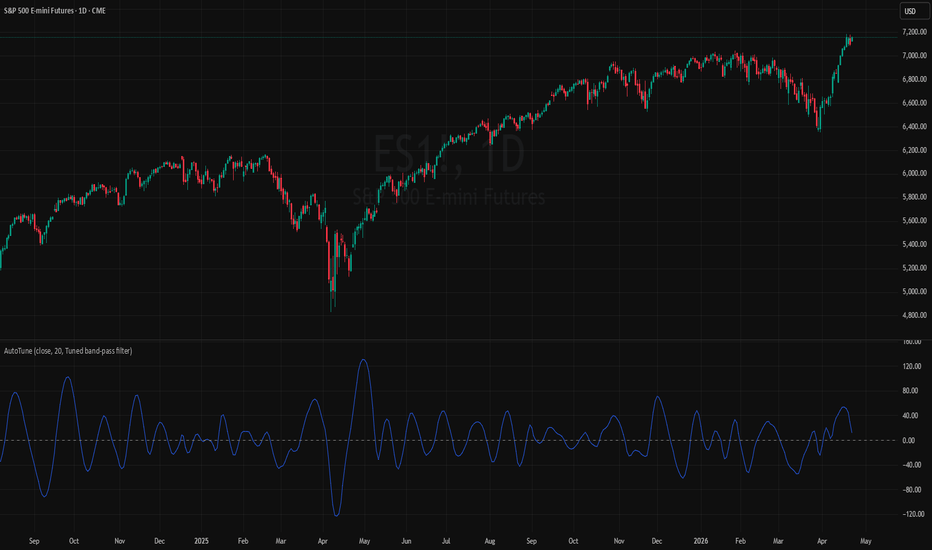

TASC 2026.05 The AutoTune Filter

█ OVERVIEW

This script implements the AutoTune Filter described by John F. Ehlers in the article "A Rolling Autocorrelation Function" from the May 2026 edition of the TASC Traders' Tips. The script analyzes rolling autocorrelation in filtered price data to calculate a band-pass filter that dynamically adjusts to apparent dominant cycles.

█ CONCEPTS

Autocorrelation function (ACF)

Autocorrelation measures the correlation of a time series with a lagged version of itself. The autocorrelation function (ACF) evaluates autocorrelation across a range of lags to gauge the extent to which values in a series vary jointly with previous values at different offsets.

The ACF can help traders identify patterns and trends in stochastic market data, characterize long-range dependence in a series, and more. In his article, Ehlers explains how the ACF can serve as a "bridge" between analysis in the time and frequency domains for identifying dominant cycles in market data.

Ehlers notes that at low lags, such as one bar, the autocorrelation in price data tends to be very high because prices don't often change dramatically from one bar to the next. As the lag increases, autocorrelation often decreases, reaching near zero for offsets at which the latest prices do not show a clear relationship with past prices.

However, he also observes that anticorrelation (negative correlation) can emerge at specific lags, where the current values in the series move in one direction while the lagged values move in the opposite direction. Based on this observation, he suggests that a lag with strong anticorrelation can indicate a significant cycle in the market data, with a cycle length twice the analyzed lag.

To see why this behavior can indicate significant cycles, consider a sine wave that completes a full oscillation every 20 bars. If the series is currently moving up, it will be moving down 10 bars later, and moving up again 10 bars after that. The ACF of that sine wave returns -1 at a lag of 10 bars and at no other lag from 1 to 20.

In other words, a pure sine wave with a given period has perfect anticorrelation with a delayed version of itself that is offset by half of that period.
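The sine-wave argument can be checked numerically. This Python sketch measures autocorrelation as the Pearson correlation between the series and a lagged copy of itself (an assumption about the exact formula), then confirms that the lowest value for a 20-bar sine wave occurs at lag 10, which doubles to the 20-bar cycle.

```python
import math


def autocorrelation(series, lag):
    """Pearson correlation between series[lag:] and series[:-lag] (lag >= 1)."""
    a = series[lag:]
    b = series[:-lag]
    n = len(a)
    ma = sum(a) / n
    mb = sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)


# A pure sine wave with a 20-bar period, sampled over several full cycles
wave = [math.sin(2 * math.pi * t / 20) for t in range(200)]
acf = {lag: autocorrelation(wave, lag) for lag in range(1, 21)}
most_negative_lag = min(acf, key=acf.get)
dominant_cycle = 2 * most_negative_lag
```

Here `acf[10]` is -1 to within floating-point error and `acf[20]` is +1, so `dominant_cycle` evaluates to 20.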

While market data does not typically behave like a pure sine wave, the same underlying principle applies: if the current prices exhibit a strong anticorrelation with previous prices at a given offset, a dominant cycle with a length of twice that offset is likely present in the current data.

AutoTune Filter

Ehlers proposes that traders can use the dominant cycle obtained via autocorrelation to set the critical period of a filter. Tuning a filter to respond most strongly to the measured cycle may promote more consistency in time alignment and help reduce destructive phase shifts.

He demonstrates one such implementation with his AutoTune Filter, an adaptive band-pass filter whose center period dynamically increments toward the dominant cycle calculated from an ACF over a given window.

The steps to calculate the AutoTune Filter are as follows:

1. Apply a two-pole high-pass filter to the series to reduce the effect of low-frequency (long-period) cycles on the autocorrelation calculation. The filtered series emphasizes cycles with lengths up to the specified cutoff period and attenuates all others.

2. Compute the rolling ACF of the filtered data across the same window length as the filter's cutoff period.

3. Check the autocorrelation for each lag period, and identify the smallest lag with the lowest autocorrelation value. Multiply that lag by two to obtain the dominant cycle for the analyzed window.

4. If the difference between the current and previous dominant cycle is greater than two, limit the result for the current bar to two greater or less than the previous cycle's value to prevent large, sudden shifts in the filter's center period.

5. Finally, compute a band-pass filter using the value from step 4 as the center period.
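Steps 2 to 4 can be sketched in Python as follows. This assumes the input is already high-pass filtered and that the rolling ACF is a Pearson correlation between the latest window and the same window shifted back by each lag; Ehlers' exact formulation, the high-pass filter (step 1), and the band-pass filter (step 5) are not reproduced here. The function names are hypothetical.

```python
import math


def pearson(a, b):
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    den = math.sqrt(va * vb)
    return cov / den if den > 0 else 0.0


def dominant_cycle(filtered, window):
    """Steps 2-3: rolling ACF over `window` bars, then 2 x the lag with the lowest correlation.

    `filtered` holds high-pass-filtered values, oldest first; needs at least 2 * window values.
    """
    recent = filtered[-window:]  # the latest window
    best_lag, best_corr = 1, float("inf")
    for lag in range(1, window + 1):
        lagged = filtered[-window - lag:-lag]  # same window shifted back by `lag` bars
        corr = pearson(recent, lagged)
        if corr < best_corr:  # strict "<" keeps the smallest lag on ties
            best_lag, best_corr = lag, corr
    return 2 * best_lag, best_corr


def limit_change(cycle, prev_cycle, max_step=2):
    """Step 4: keep the new cycle within +/- max_step of the previous one."""
    if prev_cycle is None:
        return cycle
    return max(prev_cycle - max_step, min(prev_cycle + max_step, cycle))
```

Because lags only run up to the window length, the largest cycle this can report is twice the window, consistent with the Window input description below.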

█ USAGE

This indicator includes four display modes to visualize the AutoTune Filter's calculations:

"High-pass filter" : Plots the high-pass filtered data that the script analyzes for autocorrelation calculations.

"Min. correlation" : Plots the lowest autocorrelation value calculated for the filtered series over the analyzed window.

"Dominant cycle" : Plots the dominant cycle value that the final filter uses for its center period.

"Tuned band-pass filter" (default): Plots the final band-pass filtered result, i.e., the AutoTune filter.

Ehlers suggests that traders can identify peaks and valleys in prices for potential mean reversion signals by analyzing the rate of change in the tuned band-pass filter. If the rate of change is zero, the current price might be near a local high if the filter's value is positive, or near a local low if the value is negative.

Users can analyze the additional outputs to gain further insight into the filter's behaviors, and they can pass these plotted values to other scripts via source inputs for easy use in other custom calculations.

█ INPUTS

The indicator includes the following inputs in the "Settings/Inputs" tab:

Source: The series of values to process.

Window: The window length of the ACF calculation, and the cutoff period of the high-pass filter. The maximum possible dominant cycle length is two times this value.

Output: One of the four display modes ("High-pass filter", "Min. correlation", "Dominant cycle", or "Tuned band-pass filter").

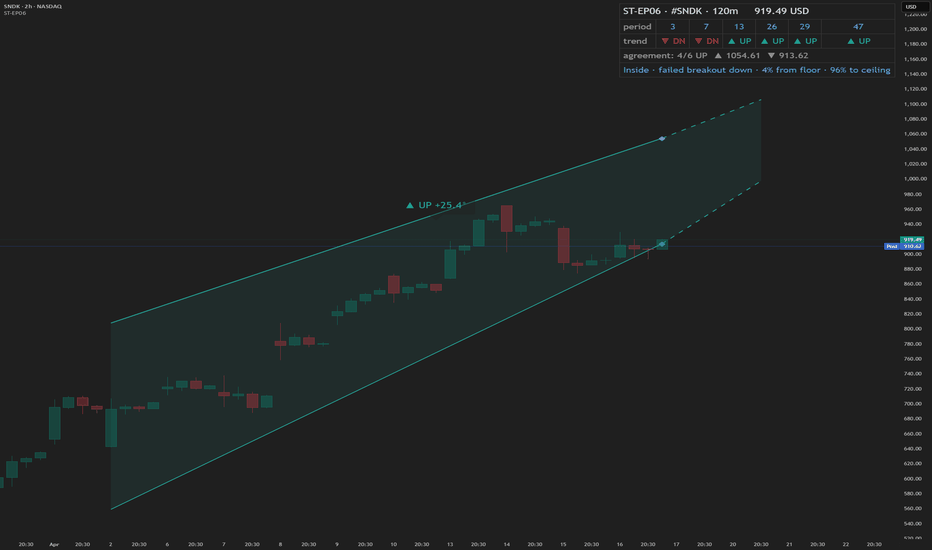

Smart Trader, Episode 06, Isotropic Trend Lines

🔷 WHAT IS ST-EP06: ISOTROPIC TREND LINES?

ST-EP06 is a multi-scale structural trend channel indicator built on a σ-normalized coordinate system. It is designed to solve one of the oldest unaddressed problems in technical analysis:

trend angles that cannot be compared across instruments, timeframes, or volatility regimes.

A trend line drawn on a chart appears to carry a measurable angle — yet that angle is an artifact of the display window, not a property of the market. Resize the chart horizontally and the slope flattens; compress it and the slope steepens. A given price movement on Gold daily and Bitcoin 1-hour may produce visually identical slopes on screen while reflecting entirely different structural conditions. This happens because traditional charts use a coordinate space where the vertical axis (price) and the horizontal axis (time) share no fixed dimensional relationship.

The consequence is not merely cosmetic. A trader cannot meaningfully compare the steepness of a trend on one instrument with another — or even across timeframes on the same instrument — because the weight of "one unit of price per bar" varies with the instrument's current volatility.

As the author of this indicator, I sought a coordinate system where trend angles would be an intrinsic structural property of the market, independent of charting software or display settings. The goal: a space where a 30° uptrend on EUR/USD weekly carries the same structural meaning as a 30° uptrend on NASDAQ 5-minute — indicating that each market is moving at the same rate relative to its own realized volatility.

The solution draws on the principle of dimensional analysis, well established in physics and engineering. Just as the Reynolds number normalizes fluid flow to make behavior comparable across different pipe sizes and fluid viscosities, this indicator normalizes price movement by realized volatility, producing a dimensionless space we call the Isotropic Coordinate System (ICS).

In ICS, price is expressed in natural logarithmic form and scaled by a volatility estimate (σ) derived from the Yang-Zhang (2000) method — a drift-invariant estimator that incorporates Open, High, Low, and Close data. The resulting vertical axis is dimensionless: one unit equals one standard deviation of recent realized price behavior. When trend angles are measured in this space, 45° indicates approximately one σ of movement per bar — whether the chart shows a penny stock, a major currency pair, or a commodity index.

Traditional chart coordinates assign no fixed relationship between the price axis and the time axis. Resizing the chart window changes the visual slope of the same price movement — a compressed view may show 52° while a stretched view of the same data shows 25°. The angle is a display artifact, not a market property. The Isotropic Coordinate System (ICS) addresses this by normalizing log-price by realized volatility (σ). In this space, the trend angle is designed to remain constant regardless of how the chart is displayed — because it measures price displacement in units of σ per bar, not in pixels per pixel.

🔷 HOW THE MODULES WORK TOGETHER

ST-EP06 operates as a deterministic pipeline where each stage consumes the output of the one before it:

Realized volatility estimation (σ) → Structural block construction → Monotonic direction detection → ICS angle measurement → Channel boundary fitting → Six-scale parallel analysis → Consensus aggregation → Breakout and retest state tracking → Dashboard narrative generation

The Yang-Zhang σ provides the normalization constant for every downstream computation. Price history is then partitioned into structural blocks, each distilled to a single central tendency that resists close-price bias. Consecutive block centers are compared to identify the longest uninterrupted directional segment. The slope of that segment, measured in σ-normalized space, yields the ICS angle. Four price extremes located within the segment define two log-linear channel boundaries. This complete pipeline runs independently at six temporal scales, and their independent outputs are aggregated into a structural consensus. A finite-state machine then tracks the evolving relationship between price and the primary channel — breakout, retest, confirmation, or failure — and translates it into a single-line human-readable narrative.

ST-EP06 operates as a deterministic sequential pipeline. Yang-Zhang volatility (σ) provides the normalization constant that flows into every downstream stage. Price history is partitioned into structural blocks, each reduced to a geometric mean. The longest monotonic segment determines direction, and its slope in σ-normalized space yields the ICS angle. Four price extremes define the channel boundaries. This complete pipeline runs independently at six scales — 3, 7, 13, 19, 29, and 47 bars per block — all prime numbers, chosen to minimize harmonic overlap so that multiple scales are unlikely to lock onto the same cyclical artifact. Scale 19 (highlighted) serves as the primary engine: it is the only scale that maps to the user's Trend Block Period input, and the only scale whose output drives the chart-overlay channel lines, the projection, the diamond markers, and the breakout/retest state machine. The other five scales operate at fixed periods and contribute exclusively to the cross-scale consensus count — providing structural context that a single scale cannot offer alone. When 5 or 6 of the 6 scales agree on direction, it suggests a structural trend visible across a broad range of temporal resolutions.

🔷 DATA ANCHORING

Every structural computation in ST-EP06 — volatility, block means, direction, channel coordinates, state machine transitions, and dashboard narrative — is governed by a single anchoring reference, selected through the Calculation Bar input.

Live Bar mode (default): the anchor is the current forming bar. Values update with each incoming tick. This is standard TradingView behavior and means the indicator may exhibit intra-bar repaint — the live bar's data enters all computations as it evolves.

Close Bar mode: the anchor shifts to the last fully confirmed (closed) bar. The forming bar is excluded from every computation. Values lock once a bar closes and do not change retroactively. This mode is intended for structural analysis, back-testing, and any workflow where historical consistency is a priority.

One deliberate exception is maintained in both modes: the dashboard header always displays the current live closing price (Live Exception protocol), preserving real-time price awareness regardless of how the indicator's structural engine is anchored.

Two modes, same chart moment. In Live Bar the anchor sits on the forming bar, so every value updates tick-by-tick and may repaint within the bar. In Close Bar the anchor shifts to the last closed bar, locking all structural values once the bar closes. The only exception is the dashboard header row, which always displays the live closing price in both modes, so real-time price awareness is never lost.

🔷 YANG-ZHANG VOLATILITY (σ)

The foundation of the ICS is a robust volatility estimate. ST-EP06 uses the Yang-Zhang (2000) realized volatility estimator, an academically established method that combines three variance components:

Overnight variance — capturing the gap between consecutive sessions, measured from the prior close to the current open.

Intraday variance — capturing the movement from open to close within each session.

Range-based variance — using the Rogers-Satchell (1991) estimator, which extracts additional information from the high and low prices without assuming zero drift.

These three components are blended using an optimal weight that is designed to minimize estimation error. The resulting σ updates every bar, adapts to changing market conditions, and — crucially — is drift-invariant: it is intended to remain unbiased whether the market is trending strongly or mean-reverting.
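For reference, here is a minimal Python sketch of the standard Yang-Zhang combination (Yang and Zhang, 2000): sample variances of the overnight and open-to-close log returns, plus the mean Rogers-Satchell term, blended with k = 0.34 / (1.34 + (n + 1) / (n - 1)). How ST-EP06 windows σ (via the Sigma Length input) or whether it smooths the result is not stated, so this simply computes per-bar σ over whatever bars are passed in.

```python
import math


def yang_zhang_sigma(opens, highs, lows, closes):
    """Per-bar Yang-Zhang volatility (in log-return units) over the supplied bars.

    Lists are oldest first; the first bar only supplies the prior close, so
    n = len(closes) - 1 bars are measured. Requires n >= 2.
    """
    n = len(closes) - 1
    if n < 2:
        raise ValueError("need at least 3 bars")
    overnight, intraday, rs_terms = [], [], []
    for i in range(1, n + 1):
        o, h, l, c = opens[i], highs[i], lows[i], closes[i]
        u, d, oc = math.log(h / o), math.log(l / o), math.log(c / o)
        overnight.append(math.log(o / closes[i - 1]))  # prior close -> open
        intraday.append(oc)                              # open -> close
        rs_terms.append(u * (u - oc) + d * (d - oc))     # Rogers-Satchell term

    def sample_var(xs):
        m = sum(xs) / len(xs)
        return sum((x - m) ** 2 for x in xs) / (len(xs) - 1)

    k = 0.34 / (1.34 + (n + 1) / (n - 1))  # weight that minimizes estimator variance
    var = sample_var(overnight) + k * sample_var(intraday) + (1 - k) * sum(rs_terms) / n
    return math.sqrt(max(var, 0.0))
```

Because every term is a log ratio, scaling all prices by a constant leaves σ unchanged, which is the property the ICS relies on when comparing instruments.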

🔷 BLOCK CONSTRUCTION

Rather than analyzing individual bars, ST-EP06 partitions recent price history into consecutive non-overlapping blocks. Each block spans a user-defined number of bars (the Trend Block Period input) and is reduced to a single representative value: the geometric mean of the block's highest high and lowest low, computed in logarithmic space.

This log-midpoint serves as the block's central tendency. Unlike a simple average of closing prices, it captures the structural center of the entire price range within the block, avoiding bias toward any single price point. The number of consecutive blocks compared is controlled by the Trend Block Groups input — more groups means deeper lookback and the ability to detect longer structural trends.

Price history is partitioned into consecutive non-overlapping blocks. Each block reduces to a single log-midpoint — the geometric mean of its highest high and lowest low. Connecting the midpoints forms the representative chain used for trend detection.
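The block reduction can be sketched in Python as below. The alignment of blocks to the most recent bar (leftover bars at the oldest end are dropped) is an assumption; the log-midpoint itself, (ln HH + ln LL) / 2, is the log of the geometric mean described above.

```python
import math


def block_midpoints(highs, lows, period):
    """Log-midpoint of each non-overlapping block of `period` bars (oldest first).

    Blocks are aligned to the most recent bar; bars that do not fill a complete
    block at the oldest end are ignored (an assumption).
    """
    count = len(highs) // period
    start = len(highs) - count * period
    mids = []
    for b in range(count):
        lo = start + b * period
        hh = max(highs[lo:lo + period])  # block's highest high
        ll = min(lows[lo:lo + period])   # block's lowest low
        mids.append((math.log(hh) + math.log(ll)) / 2)  # log of sqrt(hh * ll)
    return mids
```

For example, a block with a high of 9 and a low of 1 has a midpoint of ln 3, the geometric mean, rather than the arithmetic midpoint of 5.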

🔷 DIRECTION DETECTION + ICS ANGLE

Once blocks are constructed, the engine compares their geometric means in sequence, starting from the most recent. It identifies the longest consecutive segment where each block's central tendency moves in the same direction — either consistently rising or consistently falling. A single reversal terminates the segment.

The slope of this segment is then measured in ICS space: the logarithmic price difference between the oldest and newest blocks in the segment, divided by σ, divided by the number of bars between them. The arctangent of this normalized slope produces the ICS angle in degrees.

If the absolute angle falls within the Range Threshold (a user-configurable dead zone in degrees), the direction is classified as ranging rather than trending. This threshold acts as a sensitivity filter — wider values require steeper moves before declaring a trend, narrower values respond to subtler directional shifts.

An ICS angle of 45° indicates approximately one σ of price movement per bar. An angle near 0° suggests the market may be structurally flat. Because σ adjusts for volatility and the logarithm adjusts for price level, these angles are intended to be directly comparable across any instrument and any timeframe.
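A Python sketch of the direction and angle step, under two stated assumptions: the bar count between the oldest and newest blocks is (blocks - 1) × period, and the Range Threshold test is inclusive. It walks back from the newest midpoint while the direction holds, then converts the σ-normalized slope to degrees with the arctangent.

```python
import math


def ics_direction(mids, sigma, period, range_threshold_deg):
    """Longest monotonic run of block midpoints ending at the newest block, and its ICS angle.

    mids: log-midpoints, oldest first. sigma: per-bar volatility in log units.
    Returns (direction, angle_deg, blocks_in_segment).
    """
    if len(mids) < 2 or sigma <= 0:
        return "range", 0.0, len(mids)
    rising = mids[-1] > mids[-2]
    start = len(mids) - 2
    # Walk back while each step keeps the same direction; one reversal ends the run
    while start > 0 and (mids[start] > mids[start - 1]) == rising:
        start -= 1
    bars = (len(mids) - 1 - start) * period
    slope = (mids[-1] - mids[start]) / sigma / bars  # sigma units per bar
    angle = math.degrees(math.atan(slope))
    blocks = len(mids) - start
    if abs(angle) <= range_threshold_deg:
        return "range", angle, blocks
    return ("up" if angle > 0 else "down"), angle, blocks
```

A rise of exactly one σ per bar gives a slope of 1 and therefore 45°, matching the interpretation above.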

🔷 CHANNEL FITTING

Within the identified trending segment, the engine locates four price extremes: the highest high, the lowest high, the highest low, and the lowest low — each paired with its bar position. These four points define two linear boundaries in ICS space.

During an uptrend, the upper boundary is fitted through the lowest high and highest high (capturing the rising ceiling), while the lower boundary is fitted through the lowest low and highest low (capturing the rising floor). During a downtrend, the fitting order reverses to capture descending structure. During a ranging market, the channel uses horizontal boundaries at the segment's absolute high and low.

All boundary computations occur in the σ-normalized logarithmic coordinate system, meaning the channel lines represent geometric (log-linear) paths in price space — curves that naturally follow multiplicative price behavior rather than additive assumptions.

Within the trending segment, four extremes — HH, LH, HL, LL — define two log-linear boundaries. In an uptrend, the upper line fits through LH and HH, the lower through LL and HL. The direction reverses the fitting order for downtrends, and a ranging market uses horizontal boundaries.
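A minimal Python sketch of the uptrend fit: each boundary is the straight line through two (bar, price) points in log-price space, mapped back to price with exp(). Assuming a single σ for the whole segment, dividing by σ rescales both points equally, so it does not change which price path the line traces; the helper names are hypothetical.

```python
import math


def log_line(bar1, price1, bar2, price2):
    """Return f(bar) -> price for the straight line through two points in log-price space."""
    y1, y2 = math.log(price1), math.log(price2)
    slope = (y2 - y1) / (bar2 - bar1)
    return lambda bar: math.exp(y1 + slope * (bar - bar1))


def uptrend_channel(lh, hh, ll, hl):
    """Each argument is a (bar_index, price) pair; returns (ceiling, floor) functions.

    Upper line through the lowest high and highest high, lower line through the
    lowest low and highest low, as described for uptrends.
    """
    return log_line(*lh, *hh), log_line(*ll, *hl)
```

Evaluating the ceiling beyond the last fitted bar gives the projection: a ceiling that doubles over 10 bars reaches four times its starting level at bar 20, a geometric rather than additive extension.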

🔷 6-SCALE PARALLEL ANALYSIS

A single temporal scale may capture the trend at one resolution but miss structure at others. ST-EP06 runs the complete pipeline — volatility normalization, block construction, direction detection, ICS angle, and channel fitting — independently at six different scales: 3, 7, 13, 19, 29, and 47 bars per block. These values were chosen as prime numbers to minimize harmonic overlap between scales.

Scale 19 serves as the primary engine and maps to the user's Trend Block Period input. The other five scales use fixed periods, providing a structural context that the primary engine alone cannot offer.

The dashboard displays each scale's independent trend direction. A consensus count shows how many of the six scales agree: 5/6 or 6/6 agreement suggests a structural trend that is visible across multiple temporal resolutions, while low agreement may indicate transitional or conflicting structure.
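The consensus count amounts to a majority tally across the six per-scale directions. In this Python sketch, the label format follows the dashboard example ("5/6 UP"), while the "strong" flag (5 or more agreeing scales, excluding range) is an illustrative reading of the text, not the indicator's exact rule.

```python
from collections import Counter


def consensus(directions):
    """Count agreement among per-scale directions such as ["up", "up", "range", ...]."""
    counts = Counter(directions)
    leader, votes = counts.most_common(1)[0]
    label = f"{votes}/{len(directions)} {leader.upper()}"
    strong = votes >= 5 and leader != "range"  # 5/6 or 6/6 agreement on a trend
    return label, strong
```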

🔷 BREAKOUT / RETEST STATE MACHINE

ST-EP06 includes a 5-state finite automaton that tracks price's structural relationship to the primary channel boundaries:

Inside — price is observed between the channel floor and ceiling. The dashboard shows the position as a percentage: distance from floor and distance to ceiling (summing to 100%).

Breakout Up / Breakout Down — price has exited above the ceiling or below the floor. The dashboard shows the breakout price and the percentage of channel width that price has moved beyond the boundary.

Retest Up / Retest Down — after a breakout, price has moved at least one σ away from the boundary (establishing distance), then returned to test it. The dashboard shows both the original breakout price and the current retest level.

Transitions between states use dynamic σ-based thresholds rather than fixed percentages, meaning the sensitivity automatically adjusts with market volatility. Additional flags track:

✓ Confirmed — a breakout that has been retested and bounced at least one σ away from the boundary.

(gap) — price crossed the entire channel width in a single transition.

Failed breakout — price re-entered the channel after initially breaking out.

Direction reset — the primary trend direction changed, wiping all breakout state.
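The state logic can be sketched in Python as a small tracker. This is a simplified illustration, not ST-EP06's code. Distances are measured in σ units of log price. The retest tolerance (retest_tol), treating only an unconfirmed re-entry as a failed breakout, and flagging a gap only when price crosses from one breakout side to the other are all assumptions. Dashboard percentages are omitted, and a direction reset is left to the caller (e.g. by creating a new tracker).

```python
import math
from dataclasses import dataclass


@dataclass
class BreakoutTracker:
    """Simplified inside / breakout / retest tracker; a sketch, not ST-EP06's code."""
    state: str = "inside"
    armed: bool = False      # moved at least 1 sigma beyond the broken boundary
    confirmed: bool = False  # bounced at least 1 sigma away after a retest
    failed: bool = False     # re-entered the channel before confirmation (this update)
    gap: bool = False        # crossed the whole channel in one update

    def _enter(self, state):
        self.state, self.armed, self.confirmed = state, False, False

    def update(self, close, ceiling, floor, sigma, retest_tol=0.25):
        # Distance beyond each boundary in sigma units of log price (negative = not beyond)
        above = (math.log(close) - math.log(ceiling)) / sigma
        below = (math.log(floor) - math.log(close)) / sigma
        self.failed = self.gap = False
        if self.state != "inside":
            up = self.state.endswith("_up")
            beyond = above if up else below
            if beyond < 0:  # back inside (or straight through) the channel
                self.failed = not self.confirmed
                self.gap = below > 0 if up else above > 0
                self._enter("inside")
            elif self.state.startswith("breakout"):
                if beyond >= 1.0:
                    self.armed = True
                elif self.armed and beyond <= retest_tol:
                    self.state = "retest_up" if up else "retest_down"
                return self.state
            else:  # retest states
                if beyond >= 1.0:
                    self.confirmed = True
                return self.state
        if above > 0:
            self._enter("breakout_up")
        elif below > 0:
            self._enter("breakout_down")
        return self.state
```

Because every threshold is expressed in σ units, the same tracker reacts proportionally on quiet and volatile instruments, which is the point of σ-based transitions.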

🔷 VISUAL TOOLS

All chart-overlay elements are drawn from the primary engine (scale 19):

Channel lines — solid upper and lower boundaries from the segment start to the anchor bar, colored by trend direction (configurable up/down/range colors, width, and line style).

Projection lines — dotted forward extension of the channel slopes beyond the anchor bar, providing a visual reference for potential future support and resistance. The projection offset, width, and style are independently configurable.

Channel fill — semi-transparent shading between channel boundaries, with independent color selection and adjustable transparency. Applies to both the solid channel and projection segments.

Diamond markers (◆) — placed at the channel endpoints on the anchor bar. Hovering reveals a tooltip with the anchored close price, ceiling level, floor level, and the price's position as a percentage of channel width.

Direction label — positioned at the midpoint between segment start and projection end. Displays the trend arrow, direction text, and ICS angle (e.g., "▲ UP +7.3°"). Tooltip includes block count.

🔷 DASHBOARD

A compact information table appears at the top-right corner of the chart, organized in 5 rows:

Header — indicator name, ticker symbol, timeframe, and live price (always live under the Live Exception protocol, even in Close Bar mode).

Period — the six scale values (3, 7, 13, user's period, 29, 47) displayed across columns. The primary engine column is highlighted.

Trend — per-scale trend direction with directional arrows (▲ UP, ▼ DN, ◈ RNG) and color coding.

Agreement — consensus count (e.g., "5/6 UP") with the primary channel ceiling (▲) and floor (▼) price levels.

Narrative — a single merged row presenting the breakout/retest state machine output as a human-readable sentence with distance measurements. This row updates dynamically as price interacts with the channel.

All dashboard text, tooltips, and narrative phrases are fully localized.

🔷 ALERT CONDITIONS

ST-EP06 provides 19 alert conditions organized in 5 categories, all gated by a master Enable Alerts toggle:

D · Direction (3 alerts) — fires when the primary engine trend changes to uptrend, downtrend, or range.

B · Breakout (4 alerts) — fires on initial breakout above ceiling or below floor, and separately on confirmed breakout (retested and bounced).

R · Retest (2 alerts) — fires when price returns to test the boundary after establishing distance.

S · Structural (5 alerts) — fires on gap-through events (price crosses entire channel), failed breakouts (price re-enters channel), and direction resets (trend change wipes state).

A · Agreement (5 alerts) — fires when cross-scale consensus reaches significant thresholds: full bullish (6/6), strong bullish (5/6), full bearish (6/6), strong bearish (5/6), or range consensus (≥4/6).

Important: alerts require Calculation Bar = Live Bar. In Close Bar mode, all alert conditions are automatically suppressed and a visual warning is displayed on the chart — because Close Bar mode intentionally lags by one bar, which is semantically incompatible with live alert delivery.

🔷 LANGUAGE SUPPORT

The dashboard, all tooltips, the breakout/retest narrative, and the alert warning label are available in 7 languages:

English · Türkçe · العربية · Русский · Italiano · Português (BR) · 中文

Select the preferred language from the Language dropdown in the Display settings group. All structural and numerical outputs remain unchanged — only the display language of text elements is affected.

🔷 HOW TO USE

Apply ST-EP06 to any chart — the indicator is designed to work across instruments (equities, forex, crypto, commodities, indices) and timeframes without parameter re-optimization, because the ICS framework normalizes for volatility and price level automatically.

Start with the default settings (Period 26, Groups 5, Sigma Length 20) and observe how the channel captures the dominant structural trend. The 6-scale consensus in the dashboard may help assess whether the observed trend is isolated to one temporal resolution or confirmed across multiple scales.

The Calculation Bar setting is a structural decision: use Live Bar for real-time monitoring and alert-driven workflows; use Close Bar for analysis and back-testing where historical stability is prioritized.

The ICS angle on the direction label provides a quantitative measure of trend intensity. Comparing angles across different instruments or timeframes is one of the intended use cases of the ICS framework — a 15° angle on one chart and a 15° angle on another may suggest similar structural momentum relative to each market's own volatility.

The breakout/retest narrative in the dashboard bottom row is designed to provide context-rich status updates without requiring manual chart reading. The σ-based thresholds ensure that breakout sensitivity adapts to current market conditions rather than relying on fixed values.

🔷 SETTINGS

Calculation — Calculation Bar (Live/Close Bar anchoring), Trend Block Period (bars per block), Trend Block Groups (consecutive blocks compared), Range Threshold (ICS dead zone in degrees), Yang-Zhang Sigma Length (volatility lookback).

Channel Lines — Up Color, Down Color, Range Color, Line Width, Line Style.

Projection Lines — Projection Offset (forward bars), Projection Width, Projection Style.

Display — Language (7 options), Show Channel (toggle overlay), Show Fill (toggle shading), Show Dashboard (toggle table), Dashboard Font Size.

Channel Fill — Fill Up Color, Fill Down Color, Fill Range Color, Fill Transparency.

Alerts — Enable Alerts (master toggle, requires Live Bar mode).

🔷 DISCLAIMER

ST-EP06 is an educational and analytical tool. It is designed to provide structural context through σ-normalized trend channels and multi-scale analysis. It does not generate buy or sell signals, does not predict future price movement, and is not intended as financial advice. Historical patterns observed through this indicator do not guarantee future outcomes. All trading decisions remain the sole responsibility of the trader.

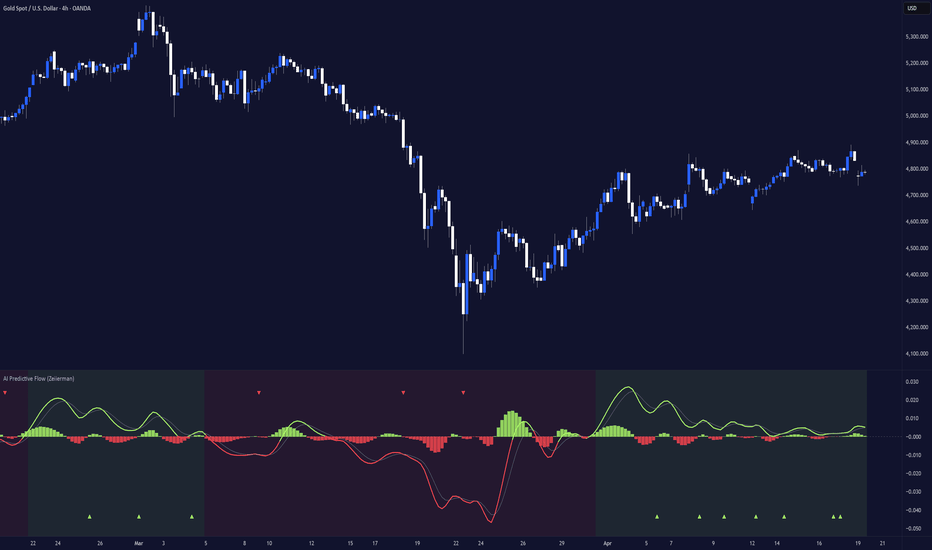

AI Predictive Flow (Zeiierman)

█ Overview

AI Predictive Flow (Zeiierman) is a pattern-based oscillator that estimates future price direction by comparing the current market state to similar historical conditions.

Instead of relying on traditional indicators like momentum or moving averages alone, the script builds a multi-feature representation of price behavior and uses a k-Nearest Neighbors (kNN) model to identify past patterns that closely resemble the present.

From those matches, it derives an expected forward return, which is then transformed into a smooth oscillator and a predicted trend regime.

The result is a forward-looking signal that reflects a data-driven expectation based on similar past patterns, not just current price movement.

█ How It Works

⚪ Feature Extraction (Market State Model)

The script converts price into a compact feature set that describes the current market state.

It uses four core features:

Short-term return

Momentum

RSI bias

EMA spread

These are created inside the feature function:

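```pine
feat(shift, mode) =>
    // Reference series for this bar offset. The [] history offsets on these
    // expressions (e.g. on close) are not shown in this excerpt.
    c = close
    c1 = close
    cm = close
    ef = ta.ema(close, fLen)
    es = ta.ema(close, sLen)
    r = ta.rsi(close, rsiLn)
    float v = 0.0
    if mode == 1
        // Short-term return: log change from c1 to c
        v := c1 != 0 ? math.log(c / c1) : 0.0
    else if mode == 2
        // Momentum: fractional change from cm to c
        v := cm != 0 ? (c - cm) / cm : 0.0
    else if mode == 3
        // RSI bias: RSI rescaled from [0, 100] to [-1, 1]
        v := (r - 50.0) / 50.0
    else
        // EMA spread: fast minus slow EMA, normalized by price
        v := c != 0 ? (ef - es) / c : 0.0
    v
```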

Each feature captures a different dimension of price behavior:

return measures immediate movement

momentum measures directional displacement

RSI bias measures internal pressure

EMA spread measures trend structure

These values are then stacked across multiple bars to form the pattern used for comparison.
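Since the bar offsets in the excerpt above are not visible, here is a Python sketch of the same four features with the offsets written out. It assumes c is the bar `shift` bars back, c1 the bar before it, and cm the bar `mom_len` bars before it; mom_len is a hypothetical momentum lookback, and the EMA and RSI series are taken as precomputed inputs.

```python
import math


def features(closes, ema_fast, ema_slow, rsi, shift, mom_len):
    """Four features for the bar `shift` bars back (all series equal length, oldest first).

    mom_len is a hypothetical momentum lookback; the excerpt does not show it.
    """
    i = len(closes) - 1 - shift
    if i - mom_len < 0:
        raise ValueError("not enough history for this shift and mom_len")
    c, c1, cm = closes[i], closes[i - 1], closes[i - mom_len]
    ret = math.log(c / c1) if c1 != 0 else 0.0                   # mode 1: short-term log return
    mom = (c - cm) / cm if cm != 0 else 0.0                      # mode 2: momentum
    rsi_bias = (rsi[i] - 50.0) / 50.0                            # mode 3: RSI rescaled to [-1, 1]
    spread = (ema_fast[i] - ema_slow[i]) / c if c != 0 else 0.0  # mode 4: EMA spread
    return ret, mom, rsi_bias, spread
```

Calling this for shift = 0, 1, 2, ... and stacking the results is what turns single-bar readings into the multi-bar pattern used for matching.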

⚪ Pattern Memory (Historical Pattern Library)

The script stores rolling sequences of each feature into separate matrices so the current market state can be compared against past states.

That process is built here:

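```pine
pushFeat(mat, mode) =>
    // Collect tot feature values, oldest first
    vals = array.new<float>(tot, 0.0)
    for i = 0 to tot - 1
        array.set(vals, tot - 1 - i, feat(i, mode))
    // Newest len values form the current pattern; oldest len values form the stored pattern
    cur = array.slice(vals, tot - len, tot)
    old = array.slice(vals, 0, len)
    matrix<float> out = matrix.new<float>(1, len, 0.0)
    for i = 0 to len - 1
        matrix.set(out, 0, i, array.get(cur, i))
    hist = array.new<float>(len, 0.0)
    for i = 0 to len - 1
        array.set(hist, i, array.get(old, i))
    // Keep at most mem stored patterns: drop the oldest row before appending
    if mat.rows() >= mem
        mat.remove_row(0)
    mat.add_row(mat.rows(), hist)
    out
```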

This creates:

a current feature row

a rolling history of prior feature patterns

So rather than comparing single-bar values, the model compares multi-bar pattern structure.

⚪ Pattern Matching Engine (kNN Distance Model)

Once the current feature pattern is built, it is compared to all stored historical patterns.

Distance is measured feature-by-feature across the full pattern length:

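```pine
getDist(matrix<float> a1, matrix<float> a2, matrix<float> a3, matrix<float> a4, matrix<float> b1, matrix<float> b2, matrix<float> b3, matrix<float> b4) =>
    // One distance per stored pattern (one row of b1..b4 each)
    out = array.new<float>(b1.rows(), 0.0)
    for i = 0 to b1.rows() - 1
        s = 0.0
        // Element-wise differences between the current pattern and stored pattern i
        d1 = a1.diff(b1.submatrix(i, i + 1)).row(0)
        d2 = a2.diff(b2.submatrix(i, i + 1)).row(0)
        d3 = a3.diff(b3.submatrix(i, i + 1)).row(0)
        d4 = a4.diff(b4.submatrix(i, i + 1)).row(0)
        // Equally weighted squared differences across all four features
        for j = 0 to len - 1
            s += math.pow(d1.get(j), 2) * 0.25 +
                 math.pow(d2.get(j), 2) * 0.25 +
                 math.pow(d3.get(j), 2) * 0.25 +
                 math.pow(d4.get(j), 2) * 0.25
        out.set(i, math.sqrt(s))
    out
```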

This produces a similarity score for every stored pattern. A smaller distance means the past setup looked more like the present one.
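The same distance in a compact Python sketch: a weighted Euclidean distance over all feature values, with the 0.25 weights from the excerpt. No per-feature scaling is shown in the excerpt, so features with larger typical magnitudes (for example the RSI bias, which spans -1 to 1) can contribute more than small log returns.

```python
import math


def pattern_distance(current, stored, weights=(0.25, 0.25, 0.25, 0.25)):
    """Weighted Euclidean distance between two multi-feature patterns.

    current/stored: four sequences (one per feature), each of the same length.
    """
    s = 0.0
    for w, a, b in zip(weights, current, stored):
        s += sum(w * (x - y) ** 2 for x, y in zip(a, b))
    return math.sqrt(s)
```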

⚪ Prediction Model (kNN Forward Expectation)

After the distances are ranked, the script selects the nearest neighbors and combines their future outcomes, giving closer matches larger weights.

The kNN model is implemented here:

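knn(dist, n) =>
    // Indices of stored patterns ordered from nearest to farthest
    ix = dist.sort_indices()
    useN = math.min(n, ix.size())
    sumD = 0.0
    avg = 0.0
    // Guard both loops: with no stored patterns, `for i = 0 to -1` would still iterate
    if useN > 0
        // Total distance of the k nearest neighbors
        for i = 0 to useN - 1
            sumD += dist.get(ix.get(i))
        // Closer neighbors get larger weights (1 - d / sumD); a single neighbor gets weight 1
        for i = 0 to useN - 1
            d = dist.get(ix.get(i))
            w = useN > 1 ? (sumD != 0 ? (1 - d / sumD) : 1.0) : 1.0
            avg += Y.get(ix.get(i)) * w
    avg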

The forward return used for comparison is defined here:

y := math.log(base) - math.log(base )

This represents the forward return following each historical pattern. The result is a weighted expectation of future movement, not just a reading of current trend.
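The weighting in `knn` can be checked by hand with a small Python mirror. It assumes `outcomes` holds the forward log return for each stored pattern, which is the role `Y` plays in the Pine code. `knn_expectation` is a hypothetical name.

```python
def knn_expectation(dist, outcomes, k):
    """Mirror of the knn() weighting: keep the k nearest patterns, weight each
    by 1 - d / sum(d) (or 1.0 for a single neighbor), sum the weighted outcomes."""
    order = sorted(range(len(dist)), key=lambda i: dist[i])  # like dist.sort_indices()
    use_n = min(k, len(order))
    if use_n == 0:
        return 0.0
    nearest = order[:use_n]
    sum_d = sum(dist[i] for i in nearest)
    total = 0.0
    for i in nearest:
        w = 1 - dist[i] / sum_d if (use_n > 1 and sum_d != 0) else 1.0
        total += outcomes[i] * w
    return total
```

With k = 2 and distances 0.1 and 0.3, the weights are 0.75 and 0.25. When more than one neighbor is used, the weights `1 - d / sumD` add up to k - 1 rather than 1, so the result is a weighted sum. Dividing by k - 1 would turn it into a weighted mean with the same sign.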

⚪ Predictive Oscillator

The raw kNN prediction is smoothed and transformed into the main oscillator and signal line.

pred_ = ta.ema(pred, smth)
if not na(pred)
    predSm := smth > 1 ? pred_ : pred
osc = ta.ema(predSm, oscLn)
sig = ta.ema(osc, sigLn)
hist = osc - sig

This creates:

Oscillator = smoothed expected return

Signal line = secondary smoothing for crossover confirmation

Histogram = distance between oscillator and signal
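The smoothing chain is three EMAs in series. The Python sketch below assumes the usual EMA factor alpha = 2 / (length + 1) and seeds each EMA with its first input. Pine's built-in `ta.ema` may warm up differently, and the original's `na` handling is omitted. `ema` and `oscillator` are illustrative names.

```python
def ema(values, length):
    """EMA with alpha = 2 / (length + 1), seeded with the first value (assumed seeding)."""
    alpha = 2.0 / (length + 1)
    out = []
    for x in values:
        out.append(x if not out else alpha * x + (1 - alpha) * out[-1])
    return out

def oscillator(pred, smth, osc_len, sig_len):
    """Raw prediction -> optional smoothing (predSm) -> oscillator -> signal -> histogram."""
    pred_sm = ema(pred, smth) if smth > 1 else list(pred)
    osc = ema(pred_sm, osc_len)
    sig = ema(osc, sig_len)
    hist = [o - s for o, s in zip(osc, sig)]
    return osc, sig, hist
```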

⚪ Predicted Trend Regime

Beyond the oscillator, the script also builds a broader trend regime using the predicted price path.

First, the raw prediction is converted into a projected price line:

predLine := base + base * (math.exp(pred) - 1)

Then a regime band is created using ATR:

hiRef = predLine + bandM * atr
loRef = predLine - bandM * atr
if ta.highest(hiRef, regLn) == hiRef
    trendUp := true
if ta.lowest(loRef, regLn) == loRef
    trendUp := false

This background state represents:

bullish predicted regime when the projected path is pressing into new highs

bearish predicted regime when the projected path is pressing into new lows

So the background is not showing the raw price trend. It is showing the model’s predicted regime bias.
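The regime logic can be replayed in Python as shown below. The sketch assumes aligned lists of `base`, raw predictions and ATR values, and it starts with `trendUp` set to false. It also skips the warm-up period until `reg_len` values exist, which is an assumption about how the first bars are treated. `regime` and its parameter names are illustrative. If both conditions fire on the same bar, the bearish assignment wins, matching the order of the two `if` statements.

```python
import math

def regime(base, pred, atr, band_mult, reg_len):
    """Return the trendUp state per bar from the projected path and its ATR band."""
    hi_refs, lo_refs, states = [], [], []
    trend_up = False  # assumed initial state
    for b, p, a in zip(base, pred, atr):
        pred_line = b + b * (math.exp(p) - 1)   # projected price, i.e. b * exp(p)
        hi_refs.append(pred_line + band_mult * a)
        lo_refs.append(pred_line - band_mult * a)
        if len(hi_refs) >= reg_len:
            if max(hi_refs[-reg_len:]) == hi_refs[-1]:   # upper band at a new high
                trend_up = True
            if min(lo_refs[-reg_len:]) == lo_refs[-1]:   # lower band at a new low
                trend_up = False
        states.append(trend_up)
    return states
```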

█ How to Use

⚪ Read the Oscillator

Above 0 → bullish expectation

Below 0 → bearish expectation

Near 0 → neutral/low conviction

Far from 0 → strong directional push

Use crossovers for entry timing:

Bullish crossover → potential upward continuation

Bearish crossover → potential downward continuation
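Here, a bullish crossover means the oscillator moving from at or below the signal line to above it, and a bearish crossover is the reverse. The Python sketch below encodes that definition. The exact tie handling of Pine's `ta.crossover`/`ta.crossunder` may differ, and `crossovers` is a hypothetical helper.

```python
def crossovers(osc, sig):
    """Per bar: +1 on a bullish cross of osc over sig, -1 on a bearish cross, else 0."""
    out = [0]  # no previous bar on the first value
    for i in range(1, len(osc)):
        prev_diff = osc[i - 1] - sig[i - 1]
        diff = osc[i] - sig[i]
        if prev_diff <= 0 < diff:
            out.append(1)
        elif prev_diff >= 0 > diff:
            out.append(-1)
        else:
            out.append(0)
    return out
```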

⚪ Use the Predicted Trend Regime

The background highlights the model’s broader directional bias:

Green → predicted bullish regime

Red → predicted bearish regime

Regime shifts often indicate:

early trend transitions

continuation confirmation

structural changes in expectation

⚪ Combine Signals

Best use comes from alignment:

Oscillator above zero + bullish regime + bullish signal-line crossover → strong continuation bias

Oscillator below zero + bearish regime + bearish signal-line crossover → strong downside bias

Divergence between the two → caution / mixed signals
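The alignment rules above can be written as a small classifier. This is an illustrative reading of the three rules, not logic taken from the script. The label strings and the treatment of bars without a crossover are assumptions. `cross` uses +1 for a bullish crossover, -1 for a bearish one and 0 otherwise.

```python
def combined_bias(osc, trend_up, cross):
    """Classify one bar by the alignment of oscillator sign, regime and crossover."""
    if osc > 0 and trend_up and cross == 1:
        return "strong continuation bias"
    if osc < 0 and not trend_up and cross == -1:
        return "strong downside bias"
    if (osc > 0) != trend_up:          # oscillator and regime disagree
        return "caution / mixed"
    return "no aligned signal"
```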

█ Settings

Pattern Length – Controls how many bars define the current pattern. Higher values capture more structure, lower values increase responsiveness.

Memory Size – Number of historical patterns stored for comparison. Larger values improve context but increase computation.

Neighbors (k) – Number of closest matches used in prediction. Lower values are more reactive, higher values are smoother.

Prediction Smoothing – EMA smoothing applied to the raw prediction. Reduces noise at the cost of lag.

Signal Length – Smoothing of the signal line used for crossover signals.

-----------------

Disclaimer

The content provided in my scripts, indicators, ideas, algorithms, and systems is for educational and informational purposes only. It does not constitute financial advice, investment recommendations, or a solicitation to buy or sell any financial instruments. I will not accept liability for any loss or damage, including without limitation any loss of profit, which may arise directly or indirectly from the use of or reliance on such information.

All investments involve risk, and the past performance of a security, industry, sector, market, financial product, trading strategy, backtest, or individual's trading does not guarantee future results or returns. Investors are fully responsible for any investment decisions they make. Such decisions should be based solely on an evaluation of their financial circumstances, investment objectives, risk tolerance, and liquidity needs.

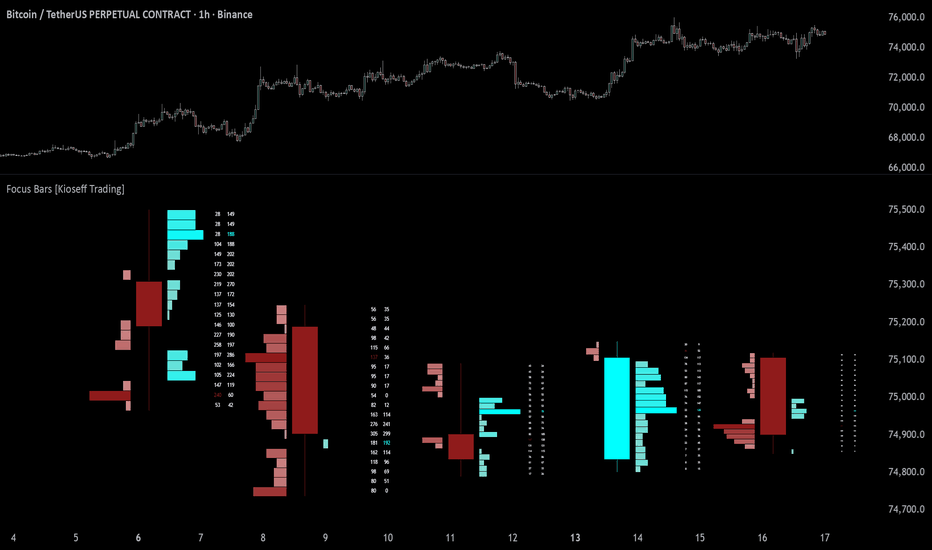

Focus Bars [Kioseff Trading]

Hello Traders!

🔹 Focus Bars

Focus Bars is a lower-timeframe reconstruction tool designed to break each candle into a price-based internal structure.

Instead of viewing a bar as a single OHLC print, this tool redistributes intrabar participation across price levels, showing where activity, delta, and directional pressure concentrated inside the bar itself.

Think of it as a way to look inside the candle.

intrabar participation distributed by price level

buy vs sell pressure mapped inside each bar

delta-driven visualization of internal structure

volume-based or delta-based profile sizing

stacked recent bars for direct comparison

lower timeframe reconstruction of candle internals (up to 1 tick)

🔹 What the tool shows

🔸 Focus Bar Structure

Each visible bar is reconstructed using lower timeframe data and divided into configurable price rows.

This allows the script to build an internal map of activity inside the candle, showing how participation was distributed throughout its range.

This helps reveal:

where activity concentrated inside the bar

which price regions attracted the most interaction

how the bar was built from low to high
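One way to picture the row structure is to bin lower-timeframe volume by price. The Python sketch below assumes that each lower-timeframe bar's full volume goes to the row containing its close, and that the candle's range is split into equal-height rows. The script may distribute volume differently, for example across each lower-timeframe bar's own range. `build_rows` is a hypothetical name.

```python
def build_rows(bar_high, bar_low, ltf_closes, ltf_volumes, rows):
    """Sum lower-timeframe volume into `rows` equal-height price rows (rows >= 1)."""
    profile = [0.0] * rows
    height = (bar_high - bar_low) / rows
    for price, vol in zip(ltf_closes, ltf_volumes):
        if height == 0:
            idx = 0  # flat bar: everything lands in one row
        else:
            idx = int((price - bar_low) / height)
            idx = min(max(idx, 0), rows - 1)  # the bar high falls into the top row
        profile[idx] += vol
    return profile
```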

🔸 Directional participation

The script estimates directional pressure using lower timeframe price movement and distributes that pressure across the bar’s traded range.

This allows you to observe:

where buying pressure was strongest

where selling pressure dominated

how directional activity was distributed through the candle

Instead of treating the candle as one net result, Focus Bars breaks it into a layered participation structure.
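The description does not say exactly how direction is inferred from lower-timeframe movement. A common approximation is the tick rule, sketched here in Python. Volume printed on an up-move counts as buying, volume on a down-move counts as selling, and unchanged prices keep the previous direction. Treat this as an assumed stand-in, not the script's method. `split_buy_sell` and the initial upward direction are hypothetical.

```python
def split_buy_sell(closes, volumes, prev_close):
    """Tick-rule split of lower-timeframe volume into buy and sell lists."""
    buys, sells = [], []
    last, direction = prev_close, 1   # assumed initial direction: up
    for c, v in zip(closes, volumes):
        if c > last:
            direction = 1
        elif c < last:
            direction = -1            # unchanged price keeps the prior direction
        buys.append(v if direction > 0 else 0.0)
        sells.append(v if direction < 0 else 0.0)
        last = c
    return buys, sells
```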

🔸 Volume mode

In its default form, the profile width reflects total intrabar participation at each price level.

This helps identify:

high activity zones inside the bar

areas where the market spent more effort

internal high-interest regions

This mode focuses on where the bar traded most actively, regardless of which side was dominant.

🔸 Delta Bars mode

When Delta Bars mode is enabled, the visualization shifts from general activity to directional imbalance.

Positive delta levels extend one way, while negative delta levels extend the other, helping expose where directional pressure accumulated inside the bar.

This makes it easier to see:

which prices were dominated by buyers

which prices were dominated by sellers

where internal imbalance became most extreme

This mode is about pressure and imbalance, not just participation.
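Once per-row buy and sell totals are known, the two display modes differ only in which quantity drives the profile width. The Python sketch below assumes widths are scaled by the largest absolute row value, so delta rows range from -1 to 1 and volume rows from 0 to 1. `row_profiles` is a hypothetical helper.

```python
def row_profiles(row_buy, row_sell):
    """Return (volume-mode widths, delta-mode widths) per price row."""
    total = [b + s for b, s in zip(row_buy, row_sell)]   # volume mode: all participation
    delta = [b - s for b, s in zip(row_buy, row_sell)]   # delta mode: buy minus sell

    def scale(vals):
        peak = max((abs(v) for v in vals), default=0.0)
        return [v / peak if peak else 0.0 for v in vals]

    return scale(total), scale(delta)
```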

🔸 Recent bar stacking

The script displays multiple recent reconstructed bars side by side, allowing you to compare internal structure across the most recent candles.

This helps reveal:

whether participation is shifting higher or lower

whether recent bars are building similarly or differently

how internal pressure changes from one bar to the next

Rather than looking at candles in isolation, you get a stacked structural view of recent bar development.

🔸 Price-row resolution

Each bar is divided into a configurable number of rows.

Higher row counts provide finer structural detail, while lower row counts simplify the visualization.

This lets you control the balance between:

detail

clarity

performance

🔸 Lower timeframe reconstruction

The script uses lower timeframe data to estimate how participation was distributed through each candle.

Granularity can be selected between:

1-minute

1-second

1-tick

This allows the internal structure to become more detailed as finer-grained data becomes available.

🔸 Buy / sell volume labels

Each price row includes separate displayed values for:

sell-side participation

buy-side participation

This gives a direct read on how activity was distributed at each level, rather than relying only on color or profile width.

🔸 Gradient-based intensity

Color gradients help represent the magnitude of participation and directional pressure at each price level.

This makes it easier to spot:

high-intensity zones

low-interest areas

strong directional concentrations

Stronger color intensity reflects stronger internal participation or imbalance.
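A gradient like this is usually a linear blend between two colors, driven by a normalized intensity. The Python sketch below shows that blend and assumes the intensity has already been scaled to [0, 1]. Pine Script's built-in `color.from_gradient()` performs this kind of interpolation, but the description does not say which approach the script uses. `gradient` is a hypothetical name.

```python
def gradient(intensity, low_rgb, high_rgb):
    """Linear blend between two RGB colors by intensity, clamped to [0, 1]."""
    t = min(max(intensity, 0.0), 1.0)
    return tuple(round(lo + (hi - lo) * t) for lo, hi in zip(low_rgb, high_rgb))
```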

🔹 How to read it

Each component gives a different layer of information:

Candle body / wick → the outer structure of the bar

Profile width → where participation concentrated

Delta mode → where directional imbalance built

Buy / sell labels → how each side contributed at a level

Stacking → how internal structure changes bar to bar

🔹 Why this tool is useful

It gives you:

a way to look inside candles instead of only at candle outcomes

price-based intrabar participation mapping

clear visualization of internal volume and delta structure

context for where buying or selling pressure concentrated

a deeper structural view of recent bar development

🔹 Best use cases

analyzing internal candle structure

comparing recent bars side by side

spotting hidden participation concentrations

finding where directional pressure built inside a move

adding lower-timeframe context to bar-by-bar analysis

🔹 Important note

This tool uses lower timeframe data to reconstruct intrabar structure.

This means:

it is an approximation of internal order flow

accuracy depends on available lower timeframe data

selected granularity impacts precision

different symbols and data feeds may produce different levels of detail

🔹 Inputs you can customize

The script includes flexible controls such as:

granularity selection

bar count to display

row resolution

volume mode vs Delta Bars mode

color customization

display offset

Closing Notes

Focus Bars is built to shift the focus from how a candle finished to how it developed internally.

It helps reveal not just what the bar looked like from the outside, but where participation and pressure were concentrated inside it.

Thank you for checking it out!

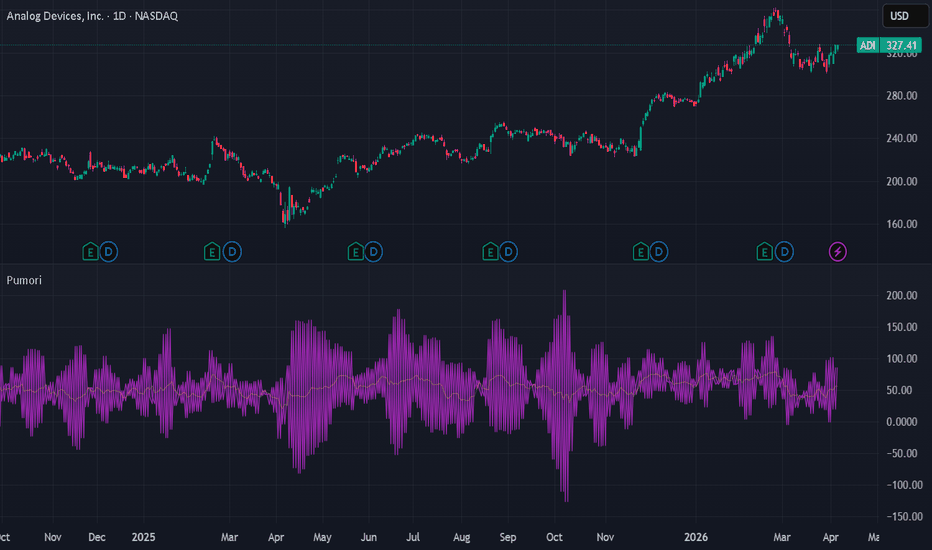

Carrier Volatility [Pumori]

Carrier Volatility

This is the foundational Pulse component of the ET Massif Framework research suite.

Description

Pumori is a high-resolution volatility and impulse response tool built around an ultra-short fractional length (0.1 EMA). It is a high-frequency carrier framework that exposes the formation of volatility through controlled instability rather than smoothing. Unlike traditional indicators that smooth or lag volatility, Pumori captures high-frequency energy, allowing volatility to be observed in near real-time as it forms.

Construct

At its core, Pumori uses:

Dual 0.1-length EMA

A sub-unit length (N < 1) is intentionally used to produce an anti-smoothing response, where the recursive term overreacts to incoming data and amplifies micro-movements. The EMA is applied twice recursively, producing a controlled oscillatory response. This interaction forms the carrier layer, where continuous oscillation exposes high-frequency volatility directly.
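The overshoot follows directly from the EMA recursion. Suppose the standard smoothing factor alpha = 2 / (N + 1) is simply evaluated at N = 0.1. Then alpha is about 1.818 and the feedback weight 1 - alpha is about -0.818, so each update overshoots the input and the previous value pulls it back. Because |1 - alpha| < 1, the oscillation decays instead of growing. The Python sketch below assumes that formula and reads "applied twice" as an EMA of the EMA. The description does not show how Pumori actually computes its fractional EMA.

```python
def frac_ema(values, length):
    """EMA with alpha = 2 / (length + 1). For length < 1, alpha > 1 and the
    feedback weight (1 - alpha) is negative, so the output overshoots."""
    alpha = 2.0 / (length + 1)
    out = []
    for x in values:
        out.append(x if not out else alpha * x + (1 - alpha) * out[-1])
    return out

def dual_frac_ema(values, length=0.1):
    """Apply the fractional EMA twice in series (assumed reading of 'dual')."""
    return frac_ema(frac_ema(values, length), length)
```

On a step from 0 to 1, the single pass jumps to about 1.82, dips to about 0.33, rebounds above 1, and settles toward 1. The second pass amplifies the first swing to roughly 3.3.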

Flexible source input (RSI, RSI SMA, close, custom)

Three default source modes are available, allowing Pumori to operate across different domains. RSI is set as the default carrier as it represents normalized momentum in a bounded range, providing a stable domain for the transform. The chosen source defines how the carrier behaves and directly influences stability, noise profile, and interpretability.

Volatility Envelope

The recursive overshoot–correction cycle forces continuous oscillation around the source, forming a dynamic envelope that expands and contracts with volatility. Pumori does not measure volatility; it reveals volatility formation.

Think of Pumori like an AM radio carrier wave. The point is not signal transmission; the point is that the carrier must operate at a high enough frequency for changes to become visible immediately. Most traditional volatility measures operate over a fixed lookback window, which makes them inherently lagging. Pumori instead lets volatility express itself immediately. The 0.1 EMA acts as a high-frequency baseline against which expansion and contraction can be observed directly.

Modes

Default

RSI is the default baseline configuration because it provides a bounded and naturally oscillatory structure (0–100), allowing the carrier to behave in a stable and interpretable manner. Unlike price, which can expand unpredictably, RSI compresses extremes and standardizes movement, making volatility expansion and contraction easier to observe. The result is a clean carrier waveform that offers the best balance between responsiveness and readability.